Thursday, February 13, 2014

SharePoint 2013 Migration: Stress Free! (SharePoint Federal User Group in Ottawa presentation)

Wednesday, June 05, 2013

Choosing and Using Cloud Services with SharePoint

Here’s a copy of my presentation for the SharePoint Summit 2013 in Toronto. I spoke about tips and tricks for evaluating and managing cloud services with SharePoint, including some common gotchas and considerations.

Because it was such a wide-ranging topic I tried to anchor it with the story of StoneShare’s own journey to the cloud. I like to keep my presentations “real world”.

I hope this is of value to someone – please feel free to contact me on LinkedIn if you have any questions about it.

Thursday, April 25, 2013

SharePoint Summit Toronto 2013

I’ll be speaking at the Toronto SharePoint Summit 2013 again this year. My topic is “A No-Hype Approach to Choosing and Using Cloud Services with SharePoint”.

I’ll be doing a really practical deep dive into SharePoint and related cloud services. I’ll talk about best practices, issues, opportunities and risks for using cloud-hosted business and infrastructure services such as Office 365, Dynamics CRM, Yammer, CloudShare, and other popular offerings with SharePoint. I am putting together a lot of real-world examples and facts that we have found at StoneShare. It’s going to be wide-ranging and cover compliance issues, branding and user experience, popular service offerings, integration and platform decisions, up-front and hidden costs, and business and IT benefits. Phew!

The good folks at Toronto SharePoint Summit also want to get the word out – so if you are interested in attending the conference, come to SharePoint Summit 2013 – Toronto.

Here’s a blurb on the event:

This year in Toronto, there is an exceptional speaker lineup with some of the top industry known SharePoint influencers and MVPs including Andrew Connell as the keynote speaker.

Benefits for your organization include:

- Learning about the SharePoint 2013 platform and its new features

- Understanding the power and potential of SharePoint

- Discovering and exploring the options for deploying SharePoint in the Cloud

- Improving your understanding of information architecture

- Understanding key SharePoint modules and how they can support solving your business problems

- Case studies of companies that have implemented SharePoint solutions

- Discovering the best development approaches when dealing with SharePoint

You can register here. Hope to see you there!

Wednesday, October 05, 2011

SharePoint Conference 2011: Using Claims for Authorization in SharePoint 2010

Rough notes of the presentation by Antonio Maio at Titus Inc. Antonio walked everyone through a complicated Claims setup and made it look pretty easy.

Agenda

- What are Claims?

- How they are used in 2010

- Enabling Authorization through claims

- Customer requirements and scenarios

- Infrastructure and Architecture

- Demonstrations

- Benefits and Goals

What are Claims?

User attributes

Metadata about a user

AD Attributes / LDAP Directory Attributes

But really it’s an assertion I make about myself – “I’m a senior product manager” – and claims can be believed if they are backed up by a trusted identity provider.

Allows us to solve problems like federation and complicated authentication schemes

Deciding what we can see and do not only based on who we are but on our clearances or the type of data, or even if we connect via a secure connection and so on.

Examples – Claims about Antonio

- Name: Antonio Maio

- Department: Product Management

- Security Clearance: Secret

- Employment Status: FTE

- Country of Birth: Canada

- ITAR Authorized: No

How Are Claims Used In SharePoint 2010?

- Authentication

- Single sign-on across systems and across domains

- Maintain End User privacy (you can configure who can see what)

- Authorization

Claims for Authentication in SharePoint 2010

New option in SP2010

Allows Claims Based, Classic Mode (Windows), and Forms Based authentication – Forms Based must be configured via claims

Using Claims – authorization can be specific to the user. It can be dynamic, e.g. reacting to changes in security clearance. It can also consider environmental attributes (e.g. current time, geo location, connection type, etc).

Enabling Authorizations Through Claims

Infrastructure and configuration have to be considered. Where are you going to store, manage, and retrieve claims?

Planning is required – policies

Development required or 3rd party applications. Native SharePoint 2010 functionality is manual. Use WS-Trust and WS-Federation to retrieve and validate claims. Design apps to verify specific required claims only – remember privacy.

Customer Scenarios

How do customers want to make use of Claims?

Document Metadata + User Claims

Ex Document classification and a user’s security clearance

Goal: Sensitive content sitting beside non-sensitive content

Policies and rule-based system that determines access control

Automation is critical and policies are simple to start.

“I believe you shouldn’t let security policies dictate where you manage your content.”

Keep policies simple to start and let the business drive new requirements.

Customer Scenario #1:

Claim: Employee Status.

Document Metadata: Classification (High Business Impact, Moderate Business Impact, Low Business Impact)

If employee.status=FTE and document.classification = HBI then PERMIT access to document

If employee.status=Contract and document.classification = HBI then DENY access to document

Customer Scenario #2:

Claim: Group membership

Document Metadata: Project

If user belongs to GroupX and belongs to GroupY and document.project=“eagle” then PERMIT access to document

If user belongs to GroupX and DOES NOT belong to GroupY and document.project=“eagle” then DENY access to document

Customer Scenario #3:

Claim: Client Case Numbers

Document Metadata: Document Case Number

If document.case=X AND client.casenumbers includes X then PERMIT access

If document.case=X AND client.casenumbers DOES NOT include X then DENY access
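The three scenarios above can be sketched as simple rule functions. This is only an illustration of the rule logic in Python, not how Titus or SharePoint evaluate policies; the `User` and `Document` classes, the field names, and the `NOT_APPLICABLE` result for cases the notes do not cover are all my own assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class User:
    # Hypothetical claim values attached to a user
    employee_status: str = ""
    groups: set = field(default_factory=set)
    case_numbers: set = field(default_factory=set)


@dataclass
class Document:
    # Hypothetical document metadata values
    classification: str = ""
    project: str = ""
    case: str = ""


def scenario_1(user: User, doc: Document) -> str:
    """Employee status claim vs. document classification."""
    if doc.classification == "HBI":
        if user.employee_status == "FTE":
            return "PERMIT"
        if user.employee_status == "Contract":
            return "DENY"
    return "NOT_APPLICABLE"  # assumption: the notes only cover HBI documents


def scenario_2(user: User, doc: Document) -> str:
    """Group membership claims vs. document project."""
    if doc.project == "eagle" and "GroupX" in user.groups:
        return "PERMIT" if "GroupY" in user.groups else "DENY"
    return "NOT_APPLICABLE"


def scenario_3(user: User, doc: Document) -> str:
    """Client case number claims vs. document case number."""
    if doc.case:
        return "PERMIT" if doc.case in user.case_numbers else "DENY"
    return "NOT_APPLICABLE"
```

For example, `scenario_1(User(employee_status="Contract"), Document(classification="HBI"))` returns `"DENY"`, which matches the second rule of Scenario #1.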

Infrastructure and Architecture

Client Web Browser talking to SharePoint:

1. User login (with user name and password)

2. SharePoint requests token from Security Token Service (ADFS v2 is an example)

3. ADFS2 wants to get claims about the user

4. Packages these claims up and signs them. Because there is a trust relationship set up between SP2010 and the claims provider SharePoint will trust this package

5. Then SharePoint does something with this claim token for authorization. SharePoint is the Relying Party application (it is RELYING on the trusted identity provider, ADFS in this case, for the claims)

Demo

AD is running on its own W2008 R2 server. Using default schema, using OrganizationalStatus attribute

Setup

1. ADFS v2 Configuration – installed as Federation Server and IIS has self-signed certificate

2. Use Wizard to create new Federation Service in IIS – note the Federation Service Name.

3. Add a Claims Description – note the claims type URL

EmployeeStatus added by Adding Claim Description – uses this URL – http://schemas.sp.local/EmployeeStatus in the example and sends Claim to calling application

4. Add Relying Party Trust – selected WS-Federation Passive Protocol. Relying Party URL = “Federation Service Name” + “/_trust/”

Relying Party Trust Identifier – urn:ServerName:application – urn:sp-server-2010.sp.local:sharepoint2010

5. Create Claims Rules for the Relying Parties. Add SharePoint 2010 trust rule – use templates – Send LDAP attributes as Claims which allows us to map LDAP mappings to claims.

ex LDAP attribute: mail maps to E-Mail Address outgoing claim attribute

LDAP attribute: organizationalStatus maps to EmployeeStatus which we created earlier

7. View and export the ADFSv2 Token Signing Certificate = c:\adfs20Certificate.cer

Transforming Claims – Claims Rule Language

example: send custom claim called “EmployeePermission” with the value of Full Control if the user belongs to the SeniorManagement group and if the value of the employee’s organization attribute in AD is “Titus”

http://technet.microsoft.com/en-us/library/dd807118%28WS.10%29.aspx

SharePoint 2010 Configuration

1. Create a new web app in central admin. Use Claims Based. Use NTLM to start. Ensure public URL matches the one in the ADFSv2 certificate – trust between this web app and the ADFSv2 server

Do not create a site collection yet

2. In IIS, setup SharePoint Site to use SSL

3. Use PowerShell to map the claim types in SharePoint

New claim mappings have to be set up using PowerShell

Will be provided on Titus.com blog where the information will be available on these steps.

4. In Central Admin, access authentication providers and check Trusted Identity Provider and then check next to the ADFSv2 Provider you added. Normally you would remove NTLM

5. Create your site collection.

6. Create sites and libraries.

Authorization Policies

Questions you need to ask:

- Which policies are right to protect the business?

- Which user attributes are important? Are you using AD or LDAP or something else?

- Which content items or content types are important?

- Which policy language do you need? (XACML, SECPAL, etc)

Tip: Keep it simple

Titus Demo

Install Metadata Security product as a Farm Solution

Apply rules to items or folders

Created 3 rules – High Business Impact, Moderate business impact, Low business impact.

Showed how Bob could log in as a contractor and not see HBI docs, could read Moderate impact, and could edit low business impact.

Then changed Bob in AD to be a Full Time Employee, and now Bob had full control over everything.

Goals and Benefits of Titus Metadata Security

Benefits:

- Security is automated

- Security is consistent

- Data Governance and Compliance Policies are fine grained.

Summary

Authentication and Authorization are different but both important. Use Claims today in SharePoint 2010.

Infrastructure and Planning is required

Plan policies with business stakeholders – Keep Simple to Start!

Saturday, September 24, 2011

SharePoint Data Governance: Achieve Consistent & Automated Security Webinar Invite

Next Friday September 30 I will be co-presenting a live webinar on SharePoint Data Governance with Microsoft and our partners Titus. I will be speaking to some real world examples of handling SharePoint security and regulatory compliance challenges using SharePoint and Titus Metadata Security.

The amount of data - and sensitive data - is growing within SharePoint environments every day, but is your organization set up to keep it all secure?

Date: September 30, 2011

Time: 12:00pm EST

Speakers:

Dr. Soheil Saadat

Principal Program Manager

Microsoft

Nick Kellett

Microsoft MVP & CTO

StoneShare

Antonio Maio

Senior Product Manager

TITUS

You can find out more information on our StoneShare.com website here:

Tuesday, April 26, 2011

SEO Tool for SharePoint 2010 WCM Sites

There is a great tool from Mavention called Meta Fields that allows SharePoint 2010 administrators to add metadata properties to the source code of their pages. This makes it easier for a search engine to crawl and rank the content and so is helpful for Search Engine Optimization (SEO).

To use this you just have to download (for free as far as I can tell) and install Mavention Meta Fields which is a SharePoint solution. After activating the feature and adding metadata columns to your page content type, you can now edit the properties and the source code spits out metadata such as (for example) keywords, subject, DC.title and DC.keywords.

Anyone with a public-facing SharePoint 2010 web site should consider using this. Complete instructions are on creator Waldek Mastykarz’s blog:

http://blog.mastykarz.nl/easy-editing-meta-tags-publishing-pages-mavention-meta-fields/

Great solution Waldek!

Friday, April 22, 2011

Managed Metadata Term Set Tools

One of the great new features of SharePoint 2010 is the new Managed Metadata service, which allows you to centrally manage your metadata. You can setup hierarchical terms in a variety of languages, and delegate the administration responsibility to end users such as Information Management staff or Records Managers.

Although it is easy enough to add individual terms, there are a variety of great tools that make it easy to create and upload entire term sets (the largest we have migrated so far is the Medical Subject Headings, or MeSH, data with about 16,000 hierarchical medical terms).

Here are some of the tools we use at StoneShare:

Excel Template with Macro

Wictor Wilen has created an Excel Template with a Macro to make it easy to populate SharePoint 2010 Term Sets.

I have uploaded the file to the Agora Development site under Shared Documents > Development Tools: https://agora.stoneshare.com/dev/Shared%20Documents/Development%20Tools/TermStoreCreator.xltm

The instructions are located here:

http://www.wictorwilen.se/Post/Create-SharePoint-2010-Managed-Metadata-with-Excel-2010.aspx

Term Set Importer / Exporter

When uploading large term sets (such as the MeSH one we used for a client) you might get timeouts in Central Administration – this is because the out-of-the-box import tool uses a web interface. There is a great (FREE!) tool on CodePlex which is a little desktop utility, and hence does not time out:

http://termsetimporter.codeplex.com/

Pre-Built Term Sets for Sale

Rather than going to the trouble of creating your own term sets, you can also purchase existing term sets from Data Facet. I haven’t yet used these so I can’t speak for them but this could be a good way to quick-start your managed metadata for a particular industry.

http://www.datafacet.com/sharepoint.aspx

One advantage of this approach (apart from the time saved recreating these) is that you will be using the identical term set as other organizations, which may help with data portability. Whether this is your business requirement or not is an open question.

I hope these tools help!

Thursday, December 30, 2010

SharePoint 2010: People Search Results Not Showing on SSL secured portal

We started using SSL on our company portal at StoneShare and the people search results stopped showing up.

After checking the log files, we realized this was caused by a top level error where the sps3:// protocol handler could not browse to the my sites anymore. Essentially due to the new SSL address the default people search handler could not find the My Sites.

The fix is to modify the search content source crawl addresses, changing the following:

sps3://[root level address]

to this:

sps3s://[root level address]

The extra “s” in the address simply tells SharePoint to use SSL when the people search index is being built on that content source.
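If you have several content sources to update, the change is just a prefix swap. Here is a minimal sketch in Python of that rewrite; the function name and the example host are made up for illustration, and you would still paste the result into the content source settings yourself.

```python
def to_ssl_crawl_address(address: str) -> str:
    """Rewrite an sps3:// people-search start address to sps3s://.

    Addresses using any other scheme (including sps3s:// itself)
    are returned unchanged.
    """
    prefix = "sps3://"
    if address.lower().startswith(prefix):
        return "sps3s://" + address[len(prefix):]
    return address


# "portal.example.com" is a placeholder host, not our real portal address
print(to_ssl_crawl_address("sps3://portal.example.com"))
```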

Hope that helps,

Nick

Monday, November 08, 2010

SharePoint 2010 Error: Document ID Not Set

Had an issue on a client machine where although Document IDs were enabled on the site collection, no IDs were being applied to the uploaded files.

SharePoint assigns these IDs as part of a timer job that runs in Central Administration. To fix this you will need to run the following two jobs:

Document ID enable/disable job

and

Document ID assignment job

for each site collection where this is not working. You can view the timer jobs in Central admin from this page:

/_admin/ScheduledTimerJobs.aspx

Hope that helps!

Wednesday, September 01, 2010

SharePoint 2010: Search Service is not able to connect to the machine that hosts the administration component

Had a strange Search error in a new SharePoint 2010 farm:

“The search service is not able to connect to the machine that hosts the administration component. Verify that the administration component 'bae8d161-c132-4570-aa0d-57baf6cf9d9b' in search application 'Search Service Application 1' is in a good state and try again.”

I was unable to start any crawls as a result.

The search services were all started correctly but in the Application Services window it showed “Error”. The fix for me was to click on the Search Service Application name in the list of Application Services, choose Properties on the ribbon above, and change the Application Pool to another one (in my case, from SharePoint Web Services Default app pool to SharePoint Web Services System).

Hope it helps someone.

Tuesday, July 20, 2010

SharePoint Workspace 2010 “exceeds the lookup column threshold”

While trying to sync to a SharePoint site using SharePoint Workspace 2010, you may bump into the following strange error: "The query cannot be completed because the number of lookup columns it contains exceeds the lookup column threshold"

This is due to SharePoint’s new resource throttling settings for managing large lists. There is an easy fix in Central Administration.

Go to Central Administration and then browse to Application Management > Manage Web Application. Select the web application you need.

In General Settings choose Resource Throttling. Set the value in the List View Lookup Threshold textbox to a higher value (equal to or greater than the number of lookup columns you are using on the list where you saw the error).

I bumped into this with Workspace but this error may appear for other client applications / systems that use SharePoint 2010 as a platform, and are trying to query lists that have more lookup columns than the throttle setting.

Tuesday, March 16, 2010

SharePoint 2010 Migration Seminar

In my new role as Chief Technical Officer at StoneShare Inc., a Canadian SharePoint solutions firm, I am currently working on SharePoint 2010 migration options, and will be presenting a seminar on migration at several different events over the next month.

This topic will be focused on administrators and business users who want to understand migration paths and how best to prepare for a 2010 move.

I will do a live demo of an in-place SharePoint 2010 Upgrade - not for the faint of heart :) - and discuss the various options, useful tools, what sort of planning is required, and actual technical steps to get a great migration result.

I’ll be publishing my slides once the seminar is complete. Here’s an overview of the demonstration:

Migrate to SharePoint 2010 - Stress Free!

Considering migrating your current SharePoint environment to SharePoint 2010? Worried about what’s involved and how to manage it? Don’t let it become a headache! This presentation will discuss some common sense business and technical approaches to take away the pain, and help you deliver your SharePoint 2010 migration project on time and on budget.

Topics Covered:

- A little history: The SharePoint 2003 to 2007 Migration experience

- Common Migration Pains

- SharePoint 2010 Technical Changes

- Governance

- Migration Options

- Migration tools and utilities

- The Migration Process

- Recommendations

I will be presenting at the following events:

SetFocus SharePoint 2010 Seminar

This is part of a four-part series of seminars on SharePoint 2010 that SetFocus is putting on.

When: Thursday, March 18 at 1 – 4 PM PST

Where: Online webinar. You can register for free here: http://www.setfocus.net/marketing/spseminarseries.aspx

Microsoft Ottawa Federal SharePoint User’s Group

This is the monthly SharePoint user group – it is definitely open for more than just Federal SharePoint Users to attend!

When: Tuesday, March 30 at 5 – 7 PM PST

Where: Ottawa at the Microsoft Office on 100 Queen Street

SharePoint 2010 Summit

When: April 12 to 14

Where: Centre Mont-Royale, Montreal

Register online now for this great SharePoint conference in one of the world’s great cities.

http://www.sharepointsummit2010.com/index_e.htm

There’s really a lot to discuss and I’m looking forward to the seminars. I’m also crossing my fingers the live upgrade goes as planned each time :)

Friday, November 06, 2009

SharePoint 2010 Likely To Offer App Store

This just in from ReadWriteWeb:

Microsoft will offer an application marketplace within Sharepoint 2010 that will integrate with third-party applications from its partner network. No date has been set for the marketplace launch but it will evolve from "The Gallery", a feature that provides Sharepoint 2010 users access to templates…

Details are few about the application marketplace that will be offered through Sharepoint. But it does point to the increasing significance of third-party applications for the Sharepoint platform and how the service may evolve as cloud computing becomes more prevalent.

I was predicting this a few weeks ago on my “Things To Get Excited About in SharePoint 2010” post. Here’s what I had to say:

Service Application Architecture – the Shared Service Provider was a good idea but it was a bit hard to use in practice. Under the new architecture, you can create Service Applications for things like Excel Services, Forms Services, Business Connectivity Services, and other services that you build or buy, and you can mix and match these in your farms as you like. The services get consumed by web front ends via a standard interface.

This should allow a lot of plug-and-play customization of farms. I’m even wondering if there is an opportunity for vendors here…create some services and expose them to clients from the cloud.

There are some other big changes like Claims Based Authentication and Solution sandboxing which are intriguing to me. The Solution sandboxing feature gives me this sneaking suspicion we will one day soon see a Microsoft SharePoint App Store where we can buy, download and run SharePoint solutions in our farms.

Magic Eight-ball now says: “You may rely on it”.

Wednesday, November 04, 2009

Hosting Clockwork Web Framework With Amazon

I’ve blogged a lot about my admiration for Amazon’s web services stack. I think they understand the web as well as any company in the world. It’s always been my intention to investigate Amazon’s Elastic Compute Cloud (EC2) and since I needed hosting for my new Clockwork Web Framework, I decided to give it a try.

The reason I went with Amazon rather than a traditional hoster is that I have no idea what kind of interest there will be in the framework, and therefore cannot predict what the load on a web server will be. Amazon EC2 is designed for this kind of flexibility, and you pay per hour.

The Platform

I am running a small Windows Server 2003 32-bit server instance to begin with. It only has 1.7 GB of RAM. I can scale this up if I need to, or more likely I will spin up another small instance and load balance the two using Amazon’s Elastic Load Balancer technology.

On this, I am using IIS 6, .NET 3.5, SQL Server 2005 Express, and PowerShell. Most of my files are kept on a permanent storage drive (more on this below) and served by IIS. In order to maximize the speed and lower the CPU burden on the server, I have decided to use another Amazon technology, CloudFront.

CloudFront Content Delivery Network

CloudFront is a Content Delivery Network (CDN), like Akamai or Limelight. I use it to serve my images and resource files. Basically Amazon has edge servers all over the world with a copy of my images and resource files, and when users request them from my website, CloudFront automatically sends them a copy from the nearest location to them, making for some very fast download times.

To make this work, you have to use Amazon Simple Storage Service, or S3. This is a virtual file system. Basically you have “buckets” of files that are served up when requests come in from the CloudFront “distributions”.

I’ve optimized it a bit by having two distributions; one for images and one for resources. This means that a page which requires both things will load even faster since two parallel CDN distributions are processing the files at the same time.
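To give a feel for the two-distribution idea, here is a rough Python sketch of picking a distribution by file type when building a URL. It is not the code my site uses; the host names and the extension list are placeholders I made up for the example.

```python
import posixpath

# Hypothetical distribution host names, for illustration only
IMAGES_HOST = "images.example.cloudfront.net"
RESOURCES_HOST = "resources.example.cloudfront.net"

# Assumed set of extensions that count as images
IMAGE_EXTENSIONS = {".png", ".jpg", ".jpeg", ".gif"}


def cdn_url(path: str) -> str:
    """Return a URL on the images or the resources distribution."""
    extension = posixpath.splitext(path)[1].lower()
    host = IMAGES_HOST if extension in IMAGE_EXTENSIONS else RESOURCES_HOST
    return f"http://{host}/{path.lstrip('/')}"


print(cdn_url("/img/logo.png"))
print(cdn_url("/js/app.js"))
```

Splitting by host name is what lets the browser fetch from both distributions in parallel.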

You can create CloudFront distributions through code, or through Amazon’s web management portal.

I notice the website loads really quickly, so the CloudFront makes a big difference.

EC2 Hosting Challenges

So that’s the high level architecture. There are a number of impacts when using Amazon as a hoster I’d like to talk about.

Server Goes Up, Server Goes Down

To begin with, you have to assume that at any moment your server will go down. If your server dies, it vanishes, and you have to “spin up” another one, using the web interface or code. It’s very easy to do from the web console: just click “Launch Instance” and you can pick any server ranging from Ubuntu Linux to Windows 2003 Server 64-bit Enterprise R2.

Although the server instances you can use have their own hard drive space on C: and D: drives, you have to treat that as transitory storage.

I’ve set up my system in such a way that I can use an Elastic Block Store (EBS) hard drive volume, provided by Amazon. This is a more permanent drive space that you pay for, but can be attached to any server instance. Think of it as a SAN (that’s probably what it is).

So I’ve got my database and web files on this EBS block, which I then mount to any server instance I’m currently running.

On the server instance, I simply point IIS web server to the EBS block files, and away we go.

The EBS can be any size you like, and you pay per GB per month. Right now I’m using 10GB since my log files and database don’t take up much room. I can add more space later if I need to.

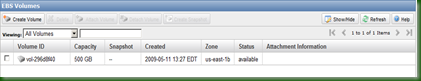

Here’s a screenshot of that EBS volume, in the Amazon web console.

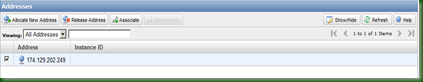

Dynamic DNS Entries

Next problem: Since the server can go down at any moment, DNS is a problem. If my server dies and I spin another one up, it will be given its own IP address, which my DNS entry for www.clockworkwf.com wouldn’t know about. So there might be a long delay while DNS changes to the new IP address.

So, I’m using a Dynamic DNS service called Nettica. They have a management console where I can enter my various domain records and assign a short Time To Live (TTL), which means the DNS entries update frequently. So if my server dies, I can change the entry in Nettica to point to the new server’s IP address, and within a few seconds requests are going back to the right place.

Nettica even allows me to control all of this through C# code. Going forward I plan to write PowerShell server management scripts that can automatically spin up a new server on Amazon, determine the IP, and register that with Nettica.

Incidentally, Amazon EC2 allows you to buy what are called “Elastic IP Addresses”. Essentially you can “rent” a fixed IP address which can be dynamically allocated to a server instance. So, in the short run this makes life easier for me as I have rented one, used that for my Nettica domain name record, and can assign this fixed IP to any new server instance.

Next problem: Disaster Recovery.

Disaster Recovery is even more important in the Amazon EC2 world than elsewhere, since again your instances could die at any moment… Not that they will, but the point is, they are “virtual” and Amazon isn’t making any promises (unless you buy a Service Level Agreement from them).

However, Amazon’s EC2 provides a level of DR by its very nature – you can spin up another machine in a small amount of time. Estimates for new Windows instances are about 20 minutes.

There’s also something called an Availability Zone. Essentially it means “Data Centre”. Amazon groups these into Regions, so you can spread your servers around between US-East, US-West, Europe, and so on. So when that dinosaur-killing comet hits North America, the Europe Region keeps chugging.

Right now I’m not really doing much with my database, so DR isn’t such an issue. I have some security since my files are on an EBS block. However, eventually I’ll setup a second server in another availability zone and load balance the two.

Another Challenge: Price

Amazon Web Services are flexible, and you are charged per hour, for only what you use. This is an amazing model but it doesn’t work so well for website hosting, because of course your servers are supposed to be online 24/7, 365 days a year.

It’s hard to tell for sure what the annual bill will be, but for my small server instance (remember, only 1.7 GB of RAM) it will cost well over $1,000. That’s a lot more than shared space on a regular hosting provider. However I’m willing to pay this, for the flexibility I get, and also because I think Amazon web services are a strategic advantage and so the earlier I learn about them, the more business opportunities I might unlock.
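The arithmetic behind a figure like that is simple enough to sketch. The hourly rate below is a placeholder I picked for illustration, not a quoted Amazon price, and the sketch ignores storage, bandwidth, and monitoring charges.

```python
HOURS_PER_YEAR = 24 * 365  # an always-on server runs 8,760 hours a year


def annual_instance_cost(hourly_rate_usd: float, hours: int = HOURS_PER_YEAR) -> float:
    """Cost of keeping one instance running for the given number of hours."""
    return hourly_rate_usd * hours


# Hypothetical rate for a small Windows instance (an assumption, not a real quote)
HYPOTHETICAL_HOURLY_RATE_USD = 0.125

print(annual_instance_cost(HYPOTHETICAL_HOURLY_RATE_USD))  # 1095.0
```

Pay-per-hour looks cheap until you multiply by 8,760, which is why the model suits bursty workloads better than a website that has to be up all year.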

One good thing is that Amazon has been aggressively dropping its prices as it improves its services. Additionally, they have started offering “Reserved Instances” – basically a pre-pay option for 1-year, 2-year, and 3-year terms. Unfortunately these are only for Linux servers at the moment but hopefully they will add them for Windows and then I can save money year on year.

CloudWatch Monitoring

Amazon offers a web-based monitoring option for its server instances. I’ve started using it (for an additional fee) but I’m not sold on its utility yet. I don’t think I’m using it to its full potential yet – it is supposed to help you manage server issues by monitoring thresholds.

Managing S3 Files Using Cloudberry Explorer

I needed an easy way to create and manage my buckets, CloudFront distributions, and S3 files. I found Cloudberry Explorer, and downloaded the free version of it. I was able to drag and drop 1600 files from my Software Development Kit to the S3 bucket where I’m serving the resources. Super!

There’s a pro version I might purchase which would allow me to set gzip compression and other properties on the files. This would help lower my bandwidth costs and speed up the transfer a bit.

Here’s a screenshot of Cloudberry in action:

I love how easy it is to setup and use Amazon’s web services stack. I think they have a great business model for the Cloud, and they’re the company to beat. I’m willing to rely on them for the launch of Clockwork Web framework and so far I haven’t been disappointed.

Monday, October 19, 2009

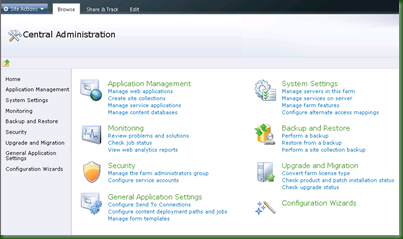

Central Administration in SharePoint 2010

Here’s a quick lap around the new Central Administration console in SharePoint 2010.

New Central Administration Layout

The navigation structure is broken down a little more than in 2007. There is no more “Operations” and “Application Management” divide; instead the new console is divided into the following sections:

- Application Management: Manage site collections, web applications, content databases, and the new service applications

- System Settings: Manage servers, features, solutions, and farm-wide settings

- Monitoring: Track, view and report the health and status of your SharePoint farms

- Backup and Restore: Perform backups or restores

- Security: Manage settings for users, policy, and global security

- Upgrade and Migration: Upgrade SharePoint, add licenses, enable Enterprise Features

- General Application Settings: Anything that doesn’t fit into one of the other sections

- Configuration Wizards: These are nice wizards to help setup or modify the farm

This new layout is an advantage – the “Operations” and “Application Management” tabs in 2007 always felt a bit arbitrary and it wasn’t always clear which tasks went where.

Monitoring

This is quite useful – basically you can take the heartbeat of SharePoint and its services via reports, and view problems and solutions. Here’s a screenshot of the interface:

There are only a couple of reports right now, which tell you which pages loaded the slowest, and which users are the most active. I imagine for release there will be many more.

The problem and solution report is very helpful in identifying which services are failing on which servers, and why. Notice in this report there is detailed information about one of the failing services, in this case Visio, and links to remedy it.

Surfacing common errors in this way will go a long way to reducing the IT administrative burden of SharePoint. I hope Microsoft is active in populating this report engine (or provides a way for the community to modify it).

Usage logging settings are in here as well.

Service Applications

Of course, to manage the new service applications Microsoft has to surface the available services and their settings in Central Admin. This screenshot gives an indication of just how many services can be used.

Export Sites and Lists

Now you can export site and list data right from SharePoint! It’s straightforward with the new Backup and Restore section, which allows full Farm Backups and Restores along with far more granular backup. The backup can include full security including site users, as well as version history information for each item in the list.

I doubt this will replace the need for 3rd party backup software but it’s another tool for IT Admins.

Here I am backing up a Calendar from a site to file.

The new service architecture of SharePoint is one of the most exciting things about it, and obviously required a bit of a Central Administration retooling. That provided an opportunity for some other quick wins, including a much more intuitive navigation structure and some neat monitoring tasks. More evidence that SharePoint 2010 is building on, but not replacing, the core strengths of 2007.


Thursday, July 16, 2009

Data Splunking

I’ve had my head down for the last couple of months, churning out code for that elusive framework I keep hinting at :) Right now I’m staying in a trailer with no tv, internet, or cell phone coverage and I’ve never been more productive (says I).

Still, I thought I would pop up briefly to mention a cool IT tool that can provide you with a centralized, browser-based repository to search on all the millions of log files, event viewers, and databases that are inevitably scattered around any company’s data centres.

It’s called splunk. Its name is clever – users get to spelunk into their data silos and see what’s there. It’s a simple, single package install that runs on most desktop machines and servers. There’s a free version if you use less than 500 megs of indexed data, and enterprises can pay to index larger corpuses. I’m running that on my Vista 64 bit box and it indexes and searches like a little champ.

In my case I’ve been using it on my framework log files to help analyze bugs and performance bottlenecks. Here’s a screenshot of a search on the keyword “nhibernate” (NHibernate is an object-relational mapping library):

As you can see, it quickly pops up all the logged events where NHibernate was called from my classes.

To get this to work, all I had to do was add an “Input” for splunk to index – in this case the full path to my log file folder.
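For anyone who hasn't used a log indexer before, here's a rough Python sketch of the basic idea: point at a folder of log files and pull out every line that mentions a keyword. It is only an illustration, not how splunk works internally and not its API. The function name search_logs and the *.log file pattern are assumptions made for the example.

```python
from pathlib import Path


def search_logs(folder, keyword):
    """Return (file name, line number, line text) for each matching log line.

    Hypothetical helper for illustration only: it scans *.log files under
    `folder` (including subfolders) and matches `keyword` case-insensitively.
    """
    needle = keyword.lower()
    hits = []
    for path in sorted(Path(folder).rglob("*.log")):
        # errors="replace" keeps the scan going if a log has odd bytes in it
        with path.open(encoding="utf-8", errors="replace") as handle:
            for number, line in enumerate(handle, start=1):
                if needle in line.lower():
                    hits.append((path.name, number, line.rstrip("\n")))
    return hits
```

Calling search_logs with a log folder path and the keyword "nhibernate" returns a list of tuples you can print or count. A real indexer does far more (indexing ahead of time, field extraction, reporting), which is the whole reason to use one.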

As you would expect, it does lots of reporting. It has broken down my log files into various columns. Examples of these columns are: custom C# properties I search on; the standard log file “stuff” such as the source name, date created, file size; even the SQL commands that NHibernate generates for me. I can filter these columns for even more detailed breakdowns. In the next screenshot I am reporting on Entity ID values I use to track my framework objects.

I like splunk because it’s a one-stop shop for me to analyze all my various bits of IT Operations information. There’s a slick AJAX web user interface, and so far performance seems fine for me on my little dev laptop. I find it solid, intuitive, and I don’t have to expend much effort to install, manage, or learn it.

There’s also a way to extend splunk using its custom Application Programming Interface. I haven’t had a look at it yet, but I plan to investigate when I have some free time.

I think any IT company should give splunk a test run.


Wednesday, April 01, 2009

Ottawa SharePoint User Group – PerformancePoint

Yesterday’s Ottawa SharePoint User Group was a demonstration of Microsoft PerformancePoint, given by Microsoft Canada’s Olivier van Brandeghem. PerformancePoint is a Business Intelligence product that was built on top of SharePoint (MOSS Enterprise only).

Olivier began by explaining Microsoft’s strategy of making Business Intelligence available across the organization. He pointed out that the people who tend to see Key Performance Indicators and Dashboards are the people who are least likely to act on them directly – so it is helpful to make these sorts of dashboards available as widely as possible. He argued that this form of Business Intelligence is collaborative or “democratized”.

In order to allow this, the technical complexities (of installing the BI product, managing it, producing OLAP cubes and other data sources, and making and deploying dashboards and reports) have to be reduced. This is a key goal of PerformancePoint, and Olivier therefore focused his demo on showing how easy it was to use.

Until now, PerformancePoint was a standalone product. As of today, April 1 (International Conficker Day!), it can no longer be purchased separately – it is part of the SharePoint Enterprise Client Access License, which means if you own Enterprise MOSS, you get PerformancePoint.

This is a huge win for clients who love the idea of dashboarding and BI but can’t afford even more software licensing in addition to their SharePoint fees. It also fits well into the Enterprise SharePoint space, which also provides basic KPIs, Excel Services, Forms Services, and the underappreciated Business Data Catalogue.

Additionally, Reporting Services can be bundled with SharePoint “natively”, so the Enterprise product fit is very good. PerformancePoint is also part of recent Microsoft moves from licensing by servers, to licensing by services. This is due to the Software+Services initiative I blogged about here.

So what exactly does PerformancePoint give you? Here’s a quick list:

- Scorecards

- Analytics

- Maps of business data

- Data Linked Images

- Search

- Advanced Filters

- Predictive KPIs (“You will come into some money”)

- Planning Data

One nice feature is the Central KPI management, where you can set the KPIs in one place and share them all over a portal.

Olivier also demonstrated how Visio diagrams can be connected to KPIs. The demonstration he showed was of a hospital, which was actually a very intuitive way of showing all these capabilities. The Visio diagram for instance was a map of hospital rooms showing infection rate, patient turnover, and other metrics, and the various rooms of the hospital turned red or yellow or green depending on the KPI result.

It seems easy for end users to create their own reports, using various templates. Olivier mentioned the use of MasterPages so there can be a level of consistency in the branding (Reporting Services, are you listening?).

Strategy Map scorecards are available. These are dynamic combinations of KPIs - almost like a workflow or flow chart - that give a more realistic flow of key business metrics. As an example, if you have some red KPIs at the beginning of a business process, your whole process might be flagged red or yellow; but if everything is alright except for a few optional business metrics that are red, your strategy map may still be Go Go Green.
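To make the roll-up idea concrete, here's a minimal Python sketch. It is not PerformancePoint's actual scoring logic, which the demo didn't go into. It simply assumes that the overall colour is the worst colour among the required KPIs and that KPIs marked optional are ignored. The Kpi class, the roll_up function, and the optional flag are all made up for illustration.

```python
from dataclasses import dataclass

# Higher number means worse status
SEVERITY = {"green": 0, "yellow": 1, "red": 2}


@dataclass
class Kpi:
    name: str
    status: str            # "green", "yellow" or "red"
    optional: bool = False  # hypothetical flag: optional KPIs don't affect the roll-up


def roll_up(kpis):
    """Return the worst status among the required KPIs (assumed rule)."""
    required = [kpi for kpi in kpis if not kpi.optional]
    if not required:
        return "green"
    return max(required, key=lambda kpi: SEVERITY[kpi.status]).status
```

Under this assumed rule, a map whose required KPIs are all green stays green even if an optional KPI is red, while a single red required KPI turns the whole map red.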

PerformancePoint ships with some built-in web parts that allow ad-hoc KPI manipulation. Generally they provide Master-Detail views and some charting or rendering components such as pivot tables. Each allows export to Excel as you would expect, where you can drill down into even more detail or take the data offline. For more information on the native SharePoint Excel / BI offerings, check out this blog post from the Sydney User Group.

The Advanced Analytics tool called ProClarity also ships with PerformancePoint. Microsoft purchased these guys in 2006 with the goal of beefing up their Business Intelligence offering. ProClarity gives you open access to the OLAP cube to manipulate and report on data. Although the tool is separate in this version, in the next version it will be tightly integrated into the rest of the toolset.

PerformancePoint supports a variety of data sources, including Relational Databases, but the obvious source is an OLAP cube. In response to a question from the audience, Olivier stressed that the goal is not to require SQL Server Analysis Services, but to work with any OLAP cube provider. Microsoft understands that companies that have made big investments in some other BI vendor, such as Cognos, won’t be willing to shift all their BI bits to another vendor simply to get dashboarding. So their goal is simply to help surface the existing data in SharePoint.

Olivier also mentioned that Enterprise Project Management, the latest version of Microsoft Project Server, now uses OLAP cubes to help report on project metrics. Anybody using PerformancePoint and EPM therefore should spend just a bit of time putting these metrics to use.

One thing that startled me a bit was the licensing discussion. Olivier mentioned that you can mix Standard and Enterprise User CALs. To be honest this recommendation has tended to vary depending on who you talked to at Microsoft. Sometimes Microsoft representatives say no, everybody in the organization has to use Enterprise CALs if anybody does; other times the response is yes, you can mix them up as long as they are tracked somehow. An official FAQ seems to imply the latter. In any case with dedicated Site Collections it’s pretty easy to lock down functionality to a select few so this is achievable in SharePoint.

The tight integration of PerformancePoint with SharePoint is part of a growing trend I mentioned a couple of years ago. More and more products will end up on top of, or talking to, the SharePoint stack. This is the whole point of having a platform. We can expect much more evidence of this in the next release.


Thursday, March 26, 2009

LDAP Authentication – More Tips

After posting about why LDAP authentication for intranets is a bad idea, I received some more emails with some tips I thought I would share.

Although most people commented that they would try to take the approach of synchronizing their e-Directory identity store to a slave Active Directory, a few were in the unfortunate position of having to implement LDAP anyway.

So if you fall into that camp, here are a few more approaches that might help you. As always your mileage might vary:

Wen He posted some great advice on modifying the People Picker for LDAP Membership providers. This is a pretty common problem – even if the profiles are importing successfully, the people picker may not show them. So here’s the quick code to do this:

You will need to add a key for the LDAP membership provider (“LDAPMember”) to the <PeoplePickerWildcards> section of the web.config for both the web application and Central Admin, as shown:

<PeoplePickerWildcards>
  <clear />
  <add key="AspNetSqlMembershipProvider" value="%" />
  <add key="LDAPMember" value="*" />
</PeoplePickerWildcards>

The line with key="LDAPMember" and value="*" explicitly specifies a wildcard, which lets the People Picker search for people and groups by enumerating users from the LDAP directory. If you don’t add this line, the People Picker will look only for an exact match on the user ID.

Wen He goes on to describe user and group filtering against LDAP in SharePoint. It’s a great post, highly descriptive and comprehensive.
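If you have to make the PeoplePickerWildcards change on more than one web.config, a small script can save some copy and paste. Here's a hedged Python sketch using only the standard library. The function name add_people_picker_wildcard is hypothetical, and it assumes the config already contains a <PeoplePickerWildcards> section. Note that ElementTree drops XML comments and original formatting when it rewrites a document, so try it on a copy and keep a backup of the real file.

```python
import xml.etree.ElementTree as ET


def add_people_picker_wildcard(config_xml, provider_name, wildcard):
    """Return config_xml with an <add key=... value=...> wildcard entry.

    Illustrative helper: it updates the entry if the key already exists,
    otherwise appends a new one to the first <PeoplePickerWildcards> section.
    """
    root = ET.fromstring(config_xml)
    section = next(root.iter("PeoplePickerWildcards"), None)
    if section is None:
        raise ValueError("no <PeoplePickerWildcards> section found")

    for entry in section.findall("add"):
        if entry.get("key") == provider_name:
            entry.set("value", wildcard)  # already present: just update it
            break
    else:
        ET.SubElement(section, "add", {"key": provider_name, "value": wildcard})

    return ET.tostring(root, encoding="unicode")
```

You would read each web.config as text, pass it through the function with "LDAPMember" and "*", and write the result back. The key has to match the name of your own membership provider.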

I also received an email from Krzysztof Wolski – after setting up LDAP and trying to log in he was having problems with “Unknown Error” (one of my all-time favourite exception messages ever!):

We have to use LDAP authentication because this is an official requirement of our client.

I've solved the problem with "Unknown error" - I've changed the default user for Application pool and set the identity information in web.config for 81 web application to:

<identity impersonate="true" userName="WIN2003\#USER#" password="#PASS#" />

We've used the same user for Application pool and impersonation.

Krzysztof also mentions one other potential gotcha that occurs if your e-Directory store is set to not allow anonymous authentication. He solved it this way:

We've successfully installed Sharepoint with LDAP Authentication.

One more thing we've added to the LdapMembershipProvider in web.config:

<membership>
  <providers>
    <add server="#IP#" port="389" useSSL="false" useDNAttribute="false"
         userDNAttribute="cn" userNameAttribute="cn"
         userContainer="o=MyCompany" userObjectClass="user"
         userFilter="(&amp;(ObjectClass=inetOrgPerson))" scope="Subtree"
         otherRequiredUserAttributes="sn,givenName,cn"
         name="LdapMembership"
         type="Microsoft.Office.Server.Security.LDAPMembershipProvider, Microsoft.Office.Server, Version=12.0.0.0, Culture=neutral, PublicKeyToken=71E9BCE111E9429C"
         connectionUsername="#USERNAME#"
         connectionPassword="#PASSWORD#" />
  </providers>
</membership>

eDirectory configuration in our case was configured to not allow anonymous access.

Thanks Krzysztof for the great tips!
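One thing to watch when pasting a provider entry like this into web.config: the file is XML, so the ampersand in an LDAP filter has to be written as &amp; inside the attribute, otherwise the file is not well-formed. Here's a small Python check that shows the difference. The two fragments are cut-down, illustrative versions of the provider entry, and read_user_filter is a made-up helper name.

```python
import xml.etree.ElementTree as ET

# Cut-down provider entries for illustration only
RAW = '<add name="LdapMembership" userFilter="(&(ObjectClass=inetOrgPerson))" />'
ESCAPED = '<add name="LdapMembership" userFilter="(&amp;(ObjectClass=inetOrgPerson))" />'


def read_user_filter(fragment):
    """Return the userFilter attribute, or None if the XML is not well-formed."""
    try:
        return ET.fromstring(fragment).get("userFilter")
    except ET.ParseError:
        return None


print(read_user_filter(RAW))      # None: the bare & is rejected by the parser
print(read_user_filter(ESCAPED))  # (&(ObjectClass=inetOrgPerson))
```

The escaped form is what goes in the file. The LDAP provider still receives the filter with a plain ampersand once the XML has been parsed.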

I hope this information helps those of you doing LDAP integration – but even more I hope you don’t have to do this in the first place :)


Friday, February 20, 2009

Using LDAP Authentication With A SharePoint Intranet Is A Very Bad Idea

A couple of years ago I wrote an article explaining step-by-step how to integrate Novell e-Directory with SharePoint. At the time it was pretty much the only available information on the web. Since then I have frequently been asked for tips on integrating LDAP with SharePoint intranets, most recently last week. So I thought I would provide some updated advice:

Run away! Run far, far away.

- Get one of your colleagues to distract your boss.

- Climb out of your office window.

- Head for the nearest bus, train station, or airport.

- Change your name.

If somehow your boss or client finds you and demands that you integrate LDAP with the SharePoint intranet, explain why it's a very bad idea.

Why is it a Very Bad Idea?

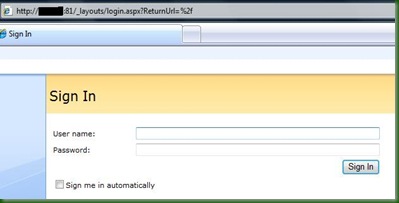

From a technical perspective LDAP integration is really just Forms Based Authentication (FBA) - you are passing in a username and password to SharePoint, and these happen to be authenticated via LDAP calls to an identity store somewhere.

Using LDAP, logging into your SharePoint portal will look like this:

Doing this is a big mistake!

Reason #1: Time Spent Tinkering

Now in order to do this, there are a variety of technical steps you need to take. If you run into problems anywhere along the way, you will spend your valuable time trying to figure out if the problem is in your Role and Membership provider settings, in your various web.configs, your LDAP query, or something else you have to enable in SharePoint.

This means you are spending your effort (and your client or employer's money) struggling to implement something that with Active Directory would "just work".

Some might argue that this isn't a great reason - after all plenty of time and effort goes into modifying SharePoint to accomplish other requirements. But any effort you expend making SharePoint work without AD is time you could be spending modifying SharePoint to address problems the business actually cares about. The business does not care that its credentials are currently stored in Novell eDirectory and SharePoint prefers them in AD.

Reason #2: Your SharePoint Intranet Won't Work The Way You Expect

Portal users expect seamless integration and functionality when they are using SharePoint for the intranet, because that's what all the marketing materials teach them to expect.

Building an intranet without Active Directory can lead to some surprising and annoying side effects. Out of the box web parts or controls like the Organizational Hierarchy don't work very well without Active Directory. You can make them work with ADAM or by exploring 3rd party replacements but you'll have to test any portal functionality you think you are likely to use.

Also, the user experience with Microsoft Office integration can become a problem. Open up Word or Excel when logged in using LDAP credentials and you might see this:

It's fun explaining that to an end user!

SharePoint Designer is also tricky - it wants to automatically authenticate you using Windows credentials, and throws errors when it runs into Forms Based Authentication.

I'm not claiming that these issues are insurmountable, but collectively they introduce new bugs, development, testing, and management issues, increase cost and risk, and potentially annoy your users. Is it really worth it?

How Is that Different from Internet or Extranet Environments?

Many extranet and internet environments use Forms Based Authentication without problems. Administrators or installers have to modify SharePoint to work in these zones using Role and Membership providers. So why is it OK for those environments to use FBA but not the intranet?

Again, it's a question of user expectations. When a user logs on to an extranet or internet site, they don't expect seamless Office integration and automatic access to file shares using their desktop credentials. When they use SharePoint intranets, they do expect these conveniences.

All the literature and sales material tells them that they should be able to interact directly with SharePoint intranets via Outlook, Word, and Excel - without annoying popup boxes and workarounds. They expect to be able to use all of the out of the box controls and web parts like the Organizational Hierarchy, and don't want to hear excuses like "that works best with Active Directory so we can't use that now".

So What Should You Do?

I recommend synchronizing your e-Directory identity information into an Active Directory domain and building SharePoint on top of that.

There are a variety of ways to do this but one way you can investigate is via Novell's IDM, which can synchronize between e-Directory and Active Directory. Your e-Directory is still the master identity store, but any e-Directory changes get automatically synced to the child Active Directory. Here's a link that might help: http://www.novell.com/coolsolutions/appnote/18349.html

You can definitely make SharePoint use multiple identity providers - but the reality is your SharePoint portal becomes much more flaky and expensive to manage. These days there are many ways to populate Active Directory from some other identity store - I always recommend that since it is less effort and risk down the road, and (probably) less cost as well.

SharePoint is complicated enough without adding to the challenges.

Of course your mileage might vary. If you have attempted (or succeeded in) integrating a SharePoint intranet with LDAP, what were your experiences? Besides IDM, are there other good ways to synchronize Active Directory with a master identity store?


Saturday, January 31, 2009

SharePoint Best Practices Conference 2009

Tomorrow I'm heading off to San Diego for the Best Practices Conference. I thought the last one was fantastic and I hope this will be just as good.

Last year's BPC had a great mix of people with all kinds of good tips and advice on SharePoint, ranging from really in-depth IT operations-style tricks to more end-user focused adoption and support techniques. There was a real buzz of enthusiasm for SharePoint that you don't often see at conferences.

This year Joel Oleson will be giving the keynote and I'm looking forward to hearing his speech. As well, there are over 40 speakers (including many MVPs), 27 sponsors, and hundreds of attendees.

Last year I presented some ideas on how to centrally manage metadata in a farm. Once again I'll have a chance to speak on a similar topic. This time my presentation is called "Best Practices For Centrally Governing Your Portal and Taxonomy" - pretty straightforward title I know :) It'll expand on last year's work - I've got a demonstration of an actual governance site collection and ideas on how to manage things centrally including key SharePoint items like content types and lookup lists. I'll post the slides in various places after the event.

On a side note, I've been on the road a lot recently and my blog postings have been less frequent. With a bit of luck I'll be able to attend some sessions and blog on the speakers this week. Either way I hope to have some more "meaty" SharePoint posts in the near future. I don't want this blog to look like a travel diary!

Thanks for reading as always,

Nick
