Thursday, April 30, 2015

It's Alive: Tips and Tricks on Surviving Your Project Go-Live

Sunday, April 19, 2015

"It's Alive!": My Upcoming Presentation at Ottawa SharePoint Meetup Group on April 30

Please join me at the Ottawa SharePoint Meetup Group's April 30th meetup, where I will be presenting "It's Alive!".

You've got to get a million small details right when launching a project, and the pressure is enormous. I will present a list of tips and tricks to assist you with project Go Live, whether you are just starting your project planning, or up against a scary deadline!

We meet at Patty Boland's pub at 101 Clarence in the Ottawa Byward Market where you can enjoy the presentation with good food, drink, and company! If you are planning to attend, please RSVP here so we can arrange enough seats.

Wednesday, June 05, 2013

Choosing and Using Cloud Services with SharePoint

Here’s a copy of my presentation for the SharePoint Summit 2013 in Toronto. I spoke about tips and tricks for evaluating and managing cloud services with SharePoint, including some common gotchas and considerations.

Because it was such a wide-ranging topic I tried to anchor it with the story of StoneShare’s own journey to the cloud. I like to keep my presentations “real world”.

I hope this is of value to someone – please feel free to contact me on LinkedIn if you have any questions about it.

Wednesday, October 05, 2011

SharePoint Conference 2011: Integrating Social Media with SharePoint Websites

These are my rough notes from an SPC presentation by Brian Rodriguez and Ryan Sockalosky. Awesome session – my favourite type – really matter of fact and presenting and solving issues clearly.

Leveraging Social Media Example

Examples of leveraging social media on Facebook sites.

Ticketmaster on Facebook: Daily Deals, large following, evangelism, popular items. Popularity leads to large revenue.

Front and center placement: put the Like button in as part of the purchasing experience. You go to concerts with your friends, and your friends are on Facebook.

What can you do to leverage Social Networks?

Help users “Like”, Tweet, and share content. Reach a broader audience. Allow your customers to evangelize for you. Drive traffic to your site.

How to Do it: Facebook

Facebook Plugins – developers.facebook.com has plugins you can use.

iFrame or XFBML (Facebook's XML markup language)

Some options: Like button, send messages, embed an activity feed plugin on the page.

Facebook OpenGraph Protocol – you can use Facebook insights to get insights into traffic patterns, who is liking what

How to do it: Twitter

Embed a Twitter RSS feed into your page, or embed a plugin to allow tweets from a page.

How to Do It: Other Social Networks

AddThis – social network sharing

Demos

Contoso electronics website

Added a “Follow” button in the master page at the bottom. Added tweet and like buttons next to the content. Defaulted Tweet content makes it dead easy for a user to click tweet and share the link.

By function of tweeting that page, it is now part of the “Social Stream” – given a bit more weight in search engines and enabled for discovery by followers of the user or his or her friends.

Created a custom webpart to allow users to tailor the text to configure the Twitter content dynamically. The page owner can decide that they want to automatically embed hashtags in any tweet via a webpart property.
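
To make the "defaulted tweet content plus hashtags" idea concrete, here is a minimal sketch of building such a share link. It assumes Twitter's Web Intent URL (`https://twitter.com/intent/tweet`); the function name and parameters are my own illustration, not the code of the web part shown in the session.

```javascript
// Build a "Tweet this" link with defaulted text and page-owner hashtags.
// Assumption: Twitter's Web Intent endpoint. buildTweetUrl and its
// parameters are hypothetical names used only for illustration.
function buildTweetUrl(text, pageUrl, hashtags) {
  var parts = [
    "text=" + encodeURIComponent(text),
    "url=" + encodeURIComponent(pageUrl)
  ];
  if (hashtags && hashtags.length > 0) {
    // Hashtags are passed comma-separated, without the leading "#".
    parts.push("hashtags=" + encodeURIComponent(hashtags.join(",")));
  }
  return "https://twitter.com/intent/tweet?" + parts.join("&");
}
```

A web part property holding the hashtag list could feed the third argument, so the page owner controls what gets embedded in every tweet.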

Facebook webpart – same options – defaulting text, show like button, show activity feed, people’s faces etc.

Tip: Make sure the tweet or facebook or sharethis links go to the home page consistently, so set some buttons in the master page footer or header and ensure every page on the site shows the sharing buttons.

One of the limitations of sandbox solutions is that you cannot call RegisterClientScript, so webparts containing these Facebook or Twitter plugins cannot be deployed as sandbox solutions. Also, a separate rendering mode was created for Design Mode so the controls render in SharePoint Designer; otherwise you will see them as broken in Design Mode.

Demo of modifying master page by overriding AdditionalPageHead content placeholder in order to inject OpenGraph metadata tags (title, site name, description).
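
As a rough sketch of what ends up inside that placeholder, the snippet below generates the three OpenGraph tags mentioned (title, site name, description). The `og:` property names come from the Open Graph protocol; the helper names and the shape of the `page` object are assumptions of mine, and the demo itself did this in the master page rather than in JavaScript.

```javascript
// Escape a value so it is safe inside a double-quoted HTML attribute.
function escapeAttr(value) {
  return String(value)
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// Build OpenGraph <meta> tags for a page.
// "page" is a hypothetical object: { title, siteName, description }.
function buildOpenGraphTags(page) {
  var props = {
    "og:title": page.title,
    "og:site_name": page.siteName,
    "og:description": page.description
  };
  return Object.keys(props).map(function (name) {
    return '<meta property="' + name + '" content="' + escapeAttr(props[name]) + '" />';
  }).join("\n");
}
```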

Enable Engagement and Conversations

Allow Social Commenting

Educate others that the conversation is happening

Increasing brand awareness and credibility by inviting the conversation to your site

Customers can engage with you and with each other

Facebook: Login plugin on your page and activity stream

Twitter: Widgets around searching or surfacing profile on your website

Demo of wrapping code in content editor webpart for these

Enabling Engagement and Commenting

Governance is key – to keep engagement clean and managed

Store and publish content on Social Sites: Examples: YouTube, Twitter, Facebook “fan pages”

Increased visibility and re-use

Customers can view/subscribe/join without ever seeing your site

Surface your external social content on your site

1 out of every 6 minutes online is spent on a social networking site

Send to Twitter: Workflow in Action

Content added to a SharePoint list triggers an approval SP workflow, which results in a REST post to Twitter. Need to store OAuth credentials in the SharePoint 2010 Secure Store Service to allow staff members to sign in and post via the company account.

Content of tweet needs to be approved before posting

Register an application with Twitter to use their API.

REST-based API, using OWA to allow approval

Set up OAuth, sending the consumer key and secret to Twitter using the access token they give us once signed up for the REST-based API (while logged in as the company’s Twitter account).

Now go into Secure Store Service and add new Group access (single version of the credentials) although you could have different Twitter profiles and those could be individual secure tokens. Define the attributes we are capturing (Screen Name, Token, TokenSecret, ConsumerKey, ConsumerSecret).

Demo of creating automatic tweet via workflow that includes page title (for a press release) and then a link back to the page automatically

Other Integration Scenarios

Leverage FAST Search for dynamic content with the FB Graph API

Connect BCS to Twitter – use native UI and WebParts with twitter content

Federated Search of Twitter, YouTube etc

Use federated login to Live or Facebook – use native SP Claims Based Auth instead of using the FB Connect plugin. Allows for audience targeting or storing a rich SP user profile

Allows for back end LOB integration (ex CRM, SAP, etc)

Tips and Considerations

Do you need to own the conversation / content?

SharePoint’s Social Networking capabilities require login

Linking is tied to pages / URLs – if pages change or are deleted, the conversation goes away

Performance of plugins – Facebook like button is at least 2 calls to Facebook. You could try to load JS asynchronously after pages load to keep down load.
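
One way to defer a plugin script until after the page has loaded is sketched below. It is only an illustration: the function name is hypothetical, the `window` object is passed in so the logic can be exercised outside a browser, and it assumes `addEventListener` is available (older IE versions would need `attachEvent` instead).

```javascript
// Inject a <script> tag only once the page itself has finished loading,
// so the plugin's network calls do not compete with the page's own content.
// "win" is the browser window object; it is a parameter here purely so the
// function can be tested with a stand-in object.
function loadScriptAfterPageLoad(win, src) {
  function inject() {
    var doc = win.document;
    var script = doc.createElement("script");
    script.src = src;
    script.async = true;
    doc.getElementsByTagName("head")[0].appendChild(script);
  }
  if (win.document.readyState === "complete") {
    inject(); // page already loaded, add the script right away
  } else {
    win.addEventListener("load", inject);
  }
}

// In a page: loadScriptAfterPageLoad(window, "<url of the plugin script>");
```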

Reference javascript in master pages or page layouts

Tuesday, October 04, 2011

SharePoint Conference 2011: Developing SharePoint Applications with HTML5 and JQuery

These are my (very) rough notes of a session by Ted Pattison at SharePoint Conference 2011 in Anaheim.

It was a great session – Ted went into great detail in a short amount of time – and it was very entertaining.

Agenda

- Using JQuery with SP 2010

- HTML5 Fundamentals

- Leveraging HTML5 Features in SP2010

- Adding support for IE8 and IE7

JQuery Fundamentals

JQuery was designed to hide differences between browsers.

Design focuses on 2 primary tasks:

- Retrieving sets of elements from HTML pages.

- Performing operations on the elements within a set

Linking to the JQuery library - link to the Microsoft CDN. Or you can add the JQuery source to the SharePoint environment in the _layouts directory or via the content DB in the site collection. Adding to _layouts is not friendly to the sandbox or Office 365

Tip: Use the <SharePoint:ScriptLink> tag in the content layout

Tip: Create a feature to deploy the library. Deploy as Visual studio solution and deploy the wsp file which contains the JQuery script files and uploads them as a module.

Configure IntelliSense for JQuery

Copy JQuery source files to folder on local machine

Need a way for JQuery code to fire at the right time (when the DOM is available).

DocumentReady Event Handler

JQuery Objects

JQuery object represents a collection of zero or more elements referred to as a “Wrapped Set”. Example:

$("p").css({"color": "#333" });

Most methods return the wrapped set so calls can be chained (i.e. do a bunch of things at once)

JQuery leverages familiar CSS selector syntax

Demonstrated using Browser debugging tool (which can be used in Office 365) to create new HTML tags dynamically using the DOM

JQuery UI Widgets

Pre-coded UI components based on built-in theming scheme – an extension to the core JQuery library

JQuery UI Widgets:

- Auto-complete

- Date Picker

- Slider, Progress bar

- Tabs

- Accordion

- Dialog

Download themes – which have CSS files you can use to configure the colours and look and feel

Working with Data

JQuery Templates: An additional extension – this is in BETA.

Templating mechanism for replacing XSLT transforms

Provides strategy for converting data collections into HTML

Demo: Creating an HTML Table with JQuery templates and making AJAX Calls with jQuery

Makes it possible to call REST based services

_vti_bin/listdata.svc for any SharePoint list returns a feed in XML format

$.getJSON(requestUrl, null, OnDataReturned);
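
A callback like OnDataReturned might look roughly like the sketch below. It assumes the usual OData JSON envelope from listdata.svc, where the items arrive under `d.results`; the function names and the field list are hypothetical, and the demo used JQuery templates rather than string building.

```javascript
// Escape text for safe use inside table cells.
function escapeCell(value) {
  return (value == null ? "" : String(value))
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// Turn a listdata.svc JSON response into <tr> rows for the given fields.
// Assumption: items are under data.d.results (OData JSON envelope).
function rowsFromListData(data, fields) {
  var items = (data && data.d && data.d.results) || [];
  return items.map(function (item) {
    var cells = fields.map(function (field) {
      return "<td>" + escapeCell(item[field]) + "</td>";
    });
    return "<tr>" + cells.join("") + "</tr>";
  }).join("");
}

// In a page with JQuery loaded, the callback could be as small as:
// function OnDataReturned(data) {
//   $("#results").html(rowsFromListData(data, ["Id", "Title"]));
// }
```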

You can POST using JSON to the SharePoint _vti_bin/listdata.svc list

Edits: you have to check the item’s ETag to see whether another user has updated the SharePoint list data in the meantime. Send it in an If-Match header and use the MERGE method.
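
Here is a hedged sketch of what such an update request could look like. It assumes the common pattern of tunnelling MERGE through a POST with an `X-HTTP-Method` header, and that the ETag was read from the item's `__metadata.etag` in an earlier JSON response. The function name and sample values are made up for illustration.

```javascript
// Build $.ajax options for updating one list item via listdata.svc.
// The If-Match header makes the server reject the update if the item
// changed since the ETag was read (optimistic concurrency).
function buildMergeRequest(itemUrl, etag, changes) {
  return {
    url: itemUrl,
    type: "POST",
    contentType: "application/json",
    data: JSON.stringify(changes),
    headers: {
      "X-HTTP-Method": "MERGE", // only the supplied fields are updated
      "If-Match": etag
    }
  };
}

// In a page with JQuery loaded (item comes from an earlier query):
// $.ajax(buildMergeRequest(item.__metadata.uri, item.__metadata.etag,
//                          { Title: "Updated title" }));
```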

HTML5

SharePoint uses XHTML 1.0 and CSS2.1

HTML 5 allows page elements to degrade gracefully. Adds JavaScript APIs and some new properties

Motivations to move: Want CSS, JavaScript and HTML to work well across all browsers. Want to target mobile devices.

Primary Pain Points: Modern browsers only support portions of HTML5. IE does not offer HTML5 tag support until IE9.

New functional elements such as canvas, geolocation, video and data list

CSS3 Changes

Borders can have rounded corners. Colors can be expressed with gradients and opacity. Text can have drop shadows and more control over text wrap. Partial adoption of new properties has been going on for years.

New JavaScript 5 APIs

Not universally supported

Demo of creating a master page supporting HTML 5 – add document type and some new tags.

You still need to keep some special named HTML divs for SharePoint 2010 (for the Ribbon)

Creating an HTML 5 Site

Demo: Creating pages using new HTML 5 features: use the canvas, use SVG graphics

Videos: tip – include multiple sources for video formats because for the moment browsers support different formats

Geolocation: Can call navigator.geolocation.getCurrentPosition to figure out where you are and load a map using that information from JavaScript
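
A minimal sketch of the geolocation call, using the standard `navigator.geolocation.getCurrentPosition(success, error)` API. The wrapper function and its callback names are my own, and the navigator object is passed in as a parameter only so the logic can be tried without a browser.

```javascript
// Ask the browser for the current position and hand the coordinates
// to onFound; call onUnavailable if geolocation is missing or fails.
function locateUser(nav, onFound, onUnavailable) {
  if (!nav || !nav.geolocation) {
    onUnavailable("Geolocation is not supported by this browser.");
    return;
  }
  nav.geolocation.getCurrentPosition(
    function (position) {
      onFound(position.coords.latitude, position.coords.longitude);
    },
    function (error) {
      onUnavailable(error.message);
    }
  );
}

// In a page:
// locateUser(navigator, function (lat, lon) { /* centre the map here */ },
//            function (reason) { /* show a fallback message */ });
```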

Browser Support Fallback

IE8 and 7 still make up significant amount of user base

Polyfills are a way of providing fallback functionality for older browsers. Supporting older browsers begins with Modernizr, an open source project that helps with this.

Some things you do in HTML5 will not work in IE 7 or 8 no matter what you do.

Added ScriptLink to the Modernizr script. Modernizr allows you to specify via CSS and JavaScript what will happen if the browser does not support one of the items (such as the new Canvas tag).
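
Modernizr exposes results such as `Modernizr.canvas`; the sketch below shows the kind of feature test that sits behind a flag like that, without depending on the library. The function names and the "fallback-image" choice are hypothetical, and the document object is passed in so the check can be run outside a browser.

```javascript
// Feature-detect the canvas element instead of sniffing the browser version.
function supportsCanvas(doc) {
  var el = doc.createElement("canvas");
  // Browsers that do not know <canvas> create a generic element
  // that has no getContext method.
  return !!(el.getContext && el.getContext("2d"));
}

// Decide what to render based on the detection result.
function chooseRenderer(doc) {
  return supportsCanvas(doc) ? "canvas" : "fallback-image";
}

// In a page: if (chooseRenderer(document) === "canvas") { /* draw */ }
```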

Tuesday, April 26, 2011

SEO Tool for SharePoint 2010 WCM Sites

There is a great tool from Mavention called Meta Fields that allows SharePoint 2010 administrators to add metadata properties to the source code of their pages. This makes it easier for a search engine to crawl and rank the content and so is helpful for Search Engine Optimization (SEO).

To use this you just have to download (for free as far as I can tell) and install Mavention Meta Fields which is a SharePoint solution. After activating the feature and adding metadata columns to your page content type, you can now edit the properties and the source code spits out metadata such as (for example) keywords, subject, DC.title and DC.keywords.

Anyone with a public website SharePoint 2010 web site should consider using this. Complete instructions are on creator Waldek Mastykarz’s blog:

http://blog.mastykarz.nl/easy-editing-meta-tags-publishing-pages-mavention-meta-fields/

Great solution Waldek!

Friday, June 18, 2010

How To: In Place Record Views in SharePoint 2010

One of the great new features in SharePoint 2010 is the In Place Record declaration. This allows a user to apply Records management to any file in a document library.

To enable this feature, you must first activate the In Place Records feature in your site collection. Next, for a given library, go to its settings and choose “Record Declaration Settings”. Choose “Always allow the manual declaration of records”.

This will allow the Declare Record button to appear in the ribbon.

Now that you have a collection of records and documents in the library, you will probably wish to sort them using views. I like to create two extra views: Active (which means all active documents or “non-records”) and All Records (which means anything declared as a record).

It is easy to create the views once you know the steps. The following steps come from my colleague Hoking:

To make the views, you need to first enable in-place records management in the library settings. Then you need to actually declare 1 document as a record manually - this is in order to get the "Declared Record" field visible in the create/modify view pages. For the Active view, filter on Declared Record equal to [blank], as in don't enter a value for the field. For the All Records view, set the filter on Declared Record not equal to [blank].

Hope that helps!

Wednesday, November 04, 2009

Hosting Clockwork Web Framework With Amazon

I’ve blogged a lot about my admiration for Amazon’s web services stack. I think they understand the web as well as any company in the world. It’s always been my intention to investigate Amazon’s Elastic Compute Cloud (EC2) and since I needed hosting for my new Clockwork Web Framework, I decided to give it a try.

The reason I went with Amazon rather than a traditional hoster is that I have no idea what kind of interest there will be in the framework, and therefore cannot predict what the load on a web server will be. Amazon EC2 is designed for this kind of flexibility, and you pay per hour.

The Platform

I am running a small 32-bit Windows Server 2003 instance to begin with. It only has 1.7 GB of RAM. I can scale this up if I need to, or more likely I will spin up another small instance and load balance the two using Amazon’s Elastic Load Balancer technology.

On this, I am using IIS 6, .NET 3.5, SQL Server 2005 Express, and Powershell. Most of my files are kept on a permanent storage drive (more on this below) and served by IIS. In order to maximize the speed and lower the CPU burden on the server, I have decided to use another Amazon technology, CloudFront.

CloudFront Content Delivery Network

CloudFront is a Content Delivery Network (CDN), like Akamai or Limelight. I use it to serve my images and resource files. Basically Amazon has edge servers all over the world with a copy of my images and resource files, and when users request them from my website, CloudFront automatically sends them a copy from the nearest location to them, making for some very fast download times.

To make this work, you have to use Amazon Simple Storage Service, or S3. This is a virtual file system. Basically you have “buckets” of files that are served up when requests come in from the CloudFront “distributions”.

I’ve optimized it a bit by having two distributions; one for images and one for resources. This means that a page which requires both things will load even faster since two parallel CDN distributions are processing the files at the same time.
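
The split between the two distributions can be sketched as a small URL helper. The host names below are placeholders (not my real distribution names) and the rule of routing by file extension is just one assumed way of deciding which distribution serves a file.

```javascript
// Hypothetical CloudFront host names, one per distribution.
var DISTRIBUTIONS = {
  images: "images.example-cdn.net",
  resources: "resources.example-cdn.net"
};

// Map a site-relative path to the distribution that should serve it,
// so images and other resources download from separate hosts in parallel.
function cdnUrl(path) {
  var isImage = /\.(png|jpe?g|gif)$/i.test(path);
  var host = isImage ? DISTRIBUTIONS.images : DISTRIBUTIONS.resources;
  return "http://" + host + "/" + path.replace(/^\/+/, "");
}
```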

You can create CloudFront distributions through code, or through Amazon’s web management portal.

I notice the website loads really quickly, so the CloudFront makes a big difference.

EC2 Hosting Challenges

So that’s the high level architecture. There are a number of impacts when using Amazon as a hoster I’d like to talk about.

Server Goes Up, Server Goes Down

To begin with, you have to assume that at any moment your server will go down. If your server dies, it vanishes, and you have to “spin up” another one, using the web interface or code. It’s very easy to do from the web console: just click “Launch Instance” and you can pick any server ranging from Ubuntu Linux to 64-bit Windows Server 2003 R2 Enterprise.

Although the server instances you can use have their own hard drive space on C: and D: drives, you have to treat that as transitory storage.

I’ve set up my system in such a way that I can use an Elastic Block Store (EBS) hard drive volume, provided by Amazon. This is a more permanent drive space that you pay for, but can be attached to any server instance. Think of it as a SAN (that’s probably what it is).

So I’ve got my database and web files on this EBS block, which I then mount to any server instance I’m currently running.

On the server instance, I simply point IIS web server to the EBS block files, and away we go.

The EBS can be any size you like, and you pay per GB per month. Right now I’m using 10GB since my log files and database don’t take up much room. I can add more space later if I need to.

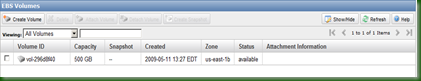

Here’s a screenshot of that EBS volume, in the Amazon web console.

Dynamic DNS Entries

Next problem: Since the server can go down at any moment, DNS is a problem. If my server dies and I spin another one up, it will be given its own IP address, which my DNS entry for www.clockworkwf.com wouldn’t know about. So there might be a long delay while DNS changes to the new IP address.

So, I’m using a Dynamic DNS service called Nettica. They have a management console where I can enter my various domain records and assign a short Time To Live (TTL), which means the DNS entries update frequently. So if my server dies, I can change the entry in Nettica to point to the new server’s IP address, and within a few seconds requests are going back to the right place.

Nettica even allows me to control all of this through C# code. Going forward I plan to write powershell server management scripts that can automatically spin up a new server on Amazon, determine the IP, and register that with Nettica.

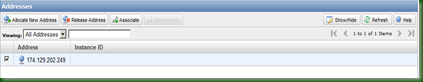

Incidentally, Amazon EC2 allows you to buy what are called “Elastic IP Addresses”. Essentially you can “rent” a fixed IP address which can be dynamically allocated to a server instance. So, in the short run this makes life easier for me as I have rented one, used that for my Nettica domain name record, and can assign this fixed IP to any new server instance.

Next problem: Disaster Recovery.

Disaster Recovery is even more important in Amazon EC2 world than elsewhere, since again your instances could die at any moment….Not that they will, but the point is, they are “virtual” and Amazon isn’t making any promises (unless you buy a Service Level Agreement from them).

However, Amazon’s EC2 provides a level of DR by its very nature – you can spin up another machine in a small amount of time. Estimates for new Windows instances are about 20 minutes.

There’s also something called an Availability Zone. Essentially it means “Data Centre” – Amazon has several of these, grouped into Regions such as US-East, US-West, and Europe, so you can spread your servers around. So when that Dinosaur-killing comet hits North America, the Europe Region keeps chugging.

Right now I’m not really doing much with my database, so DR isn’t such an issue. I have some security since my files are on an EBS block. However, eventually I’ll setup a second server in another availability zone and load balance the two.

Another Challenge: Price

Amazon Web Services are flexible, and you are charged per hour, for only what you use. This is an amazing model but it doesn’t work so well for website hosting, because of course your servers are supposed to be online 24/7, 365 days a year.

It’s hard to tell for sure what the annual bill will be, but for my small server instance (remember, only 1.7 GB of RAM) it will cost well over $1,000. That’s a lot more than shared space on a regular hosting provider. However I’m willing to pay this, for the flexibility I get, and also because I think Amazon web services are a strategic advantage and so the earlier I learn about them, the more business opportunities I might unlock.
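
The arithmetic behind an always-on server is simple enough to sketch. The hourly rate used in the example is an assumed, illustrative figure, not a quoted Amazon price, and it ignores storage, bandwidth, and monitoring charges.

```javascript
// Yearly cost of an instance billed per hour.
// Defaults model a web server that is online 24/7, 365 days a year.
function annualCost(hourlyRate, hoursPerDay, daysPerYear) {
  var hours = (hoursPerDay || 24) * (daysPerYear || 365);
  return hourlyRate * hours;
}

// With an assumed rate of $0.125 per hour:
// annualCost(0.125) → 1095
```

The same function shows why per-hour pricing shines for part-time workloads: eight hours a day at the same assumed rate comes to a third of the always-on figure.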

One good thing is that Amazon has been aggressively dropping its prices as it improves its services. Additionally, they have started offering “Reserved Instances” – basically a pre-pay option for 1-year, 2-year, and 3-year terms. Unfortunately these are only for Linux servers at the moment but hopefully they will add them for Windows and then I can save money year on year.

CloudWatch Monitoring

Amazon offers a web-based monitoring option for its server instances. I’ve started using it (for an additional fee) but I’m not sold on its utility yet. I don’t think I’m using it to its full potential yet – it is supposed to help you manage server issues by monitoring thresholds.

Managing S3 Files Using Cloudberry Explorer

I needed an easy way to create and manage my buckets, CloudFront distributions, and S3 files. I found Cloudberry Explorer, and downloaded the free version of it. I was able to drag and drop 1600 files from my Software Development Kit to the S3 bucket where I’m serving the resources. Super!

There’s a pro version I might purchase which would allow me to set gzip compression and other properties on the files. This would help lower my bandwidth costs and speed up the transfer a bit.

Here’s a screenshot of Cloudberry in action:

I love how easy it is to setup and use Amazon’s web services stack. I think they have a great business model for the Cloud, and they’re the company to beat. I’m willing to rely on them for the launch of Clockwork Web framework and so far I haven’t been disappointed.

Sunday, November 01, 2009

Introducing Clockwork Web Framework for .NET

In 2003, I read a book, “Making Space Happen”, by Paula Berinstein. It’s about the efforts of entrepreneurs to open up space to the public. It’s the kind of thing that gets my propeller-head spinning, and after reading it I resolved to create the best website on space travel on the internet.

So, I sat down in a park and within two hours I had covered several sheets of paper with scribbles and scrawls of what my website needed. I had notes on authentication, web components, search boxes, themes, dynamic images, language toggles, and all kinds of stuff.

Being a good little programmer, the more I designed, the more intricate the design became, and pretty soon I was knee-deep in code. Flash forward six years later, and I have yet to write a single page of that space website!

But I do have a web framework :)

What It Is

Clockwork makes it easy to build powerful .NET web sites. It’s completely free, open source (under the Apache 2 license) and you can use it in proprietary or open source projects, as you like.

Some of the ways it makes web development easy:

- Database-agnostic data access

- Dynamically displays content in different languages

- Leverages the .NET 3.5 framework, including the Provider Model, generics, LINQ, automatic properties, and more

- Integrates with popular web services such as those provided by UserVoice, LinkedIn, Google and Yahoo!

- Makes it really easy to use object-oriented programming standards like Dependency Injection / Inversion of Control, Repositories, and Specifications

Under the hood I use many popular components, including NHibernate for database access, Castle Windsor for Dependency Injection, and log4Net for logging.

Although today marks the official public release, the framework is currently at version 3.x because I’ve been using earlier versions of it in production websites since 2004.

I’ve built Clockwork using as many web standards as I can find, as many of the latest .NET elements as possible, software best practices, and a lot of love and stubbornness.

What It Will Become

Well, it’s obviously too early to say. But I am committed to continuing to develop it, I have a long list of things I plan to add, and I’m hopeful a community of .NET developers will adopt it and push it into areas I can’t even imagine today.

Please take a minute to visit the website and learn more about it. I hope you find it helpful.

Many thanks,

Nick

Monday, October 12, 2009

SharePoint: A Product and a Platform

SetFocus just published another of my articles for their Technical Articles section. This one is called “SharePoint: A Product and a Platform”, and discusses the implications of SharePoint as a software platform.

My conclusions are that the platform provides significant capabilities including a unified development environment, reduced maintenance, development, support, and training costs, and may increase the risk of vendor lock-in.

I’ve written for SetFocus before because I have a long association with them, dating back a decade. I had my Java certification training and first job placement through them. For the past year I’ve been developing and teaching parts of their SharePoint programming classes for the SharePoint Master’s Program (I’m instructing evening classes again starting this Saturday).

You can read more at http://www.setfocus.com/TechnicalArticles/Articles/sharepointproductandplatform.aspx. I hope you enjoy it and welcome your feedback!

P.S. The article is licensed under the Creative Commons Attribution-Share Alike 3.0 Unported License which means you can modify it and share it around!

Wednesday, August 19, 2009

NHibernate Performance Profiling with NHProf

NHibernate

I’ve been using NHibernate a lot recently. It’s an Object Relational Mapping software that makes it easy to “map” between SQL database syntax and standard C# object models. The goal is to talk to databases, by writing code like this:

// HQL query with a named parameter; returns strongly typed WebPage objects
var query = session.CreateQuery("from WebPage p where p.VirtualPath like :path")
    .SetString("path", "%pages%");
IList<WebPage> list = query.List<WebPage>();

Now behind the scenes, there’s a relational database somewhere – and transactions, and validation, and syntax parsing, and query analyzing, and all of that standard relational database stuff – but as a programmer I just need to know about my object model and it will return me a list of WebPage objects and I can easily use them in my code, update them, and delete them. Shiny!

NHibernate is a straight port of Hibernate, from the Java world where it originally evolved many years ago. So the concepts behind it have been field-tested in both Java and (now) .NET shops. This makes it a very robust ORM tool. Did I mention it is completely FREE?

While it’s amazing software, it comes with a big learning curve. There isn’t much documentation out there – and most of it is on blogs and wikis. I’ve bought Manning’s NHibernate In Action and that helps a bit. However, there isn’t much information on common performance and configuration traps.

Learning and Analyzing With NHProf

So I was glad to find out about NHProf, a profiling and analyzing tool for NHibernate created by one of NHibernate’s main developers (Oren Eini aka Ayende Rahien). His colleagues are Christopher Bennage and Rob Eisenberg.

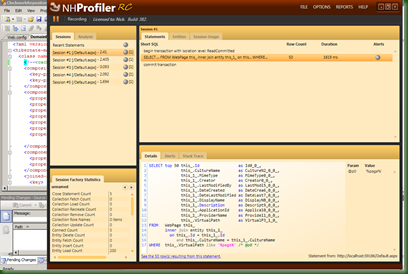

Essentially the profiler is a slick-looking Windows Presentation Foundation executable that “records” your application as it writes statistical data to the NHibernate log file, then provides a graphical view of the various things that are going on under the hood.

The interface is well thought out, with only a few tabs and windows, so the information is easy to sort through. Here’s a screenshot of the main interface:

What I Like About It

Now to the things I really like about this software:

First, you can see the exact SQL query that NHibernate is generating. Straightforward, but critical. There is a related Stack Trace which allows you to jump to the part of your code where you executed this statement.

As well, you can view the rows that are returned by any query. This makes it easy to see exactly what data you are getting back – a much-needed sanity check at times :)

Each NHibernate action is evaluated against known best practices (or bad practices) and you get “Alerts” that can provide more information on what to do (or not do).

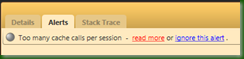

For example, while running some recent queries, I received the following alert: “Too many cache calls per session”.

This leads me to the final element that I LOVE – the “read more” and “NHibernate Guidance” features. Software is so complicated that I just want to get it working most of the time – but I know that if I really understood it, I would avoid a lot of bugs and future issues.

So what makes this software shine for me is the care that has gone into helping people learn NHibernate. By clicking “read more” you go straight to a web page that teaches you about that particular error and ways to avoid it – including code samples!

As well, there is a “Guidance” option that you can always access to learn about general NHibernate performance issues such as “Select N+1” or “Unbounded Result Set”. I’ve already applied the lessons from “Unbounded Result Set” and “Do Not Use Implicit Transactions” to my code and the result is much better performance and stability.

One thing I would like is the ability to hover over an alert in the statement in the main window, and actually see a tooltip of the alert message. At the moment you can see the icon showing the alert, but then have to click on the statement and then click on the “Alerts” tab at the bottom to see what it’s for.

NHProf is still in final beta but I have been using it for about a month and have found it to be very stable. I just bought my copy – there is a discount right now before it hits RTM and I think it has already been worth the money.

I would recommend this to anybody using NHibernate.

Thursday, July 16, 2009

Data Splunking

I’ve had my head down for the last couple of months, churning out code for that elusive framework I keep hinting at :) Right now I’m staying in a trailer with no tv, internet, or cell phone coverage and I’ve never been more productive (says I).

Still, I thought I would pop up briefly to mention a cool IT tool that can provide you with a centralized, browser-based repository to search on all the millions of log files, event viewers, and databases that are inevitably scattered around any company’s data centres.

It’s called splunk. Its name is clever – users get to spelunk into their data silos and see what’s there. It’s a simple, single package install that runs on most desktop machines and servers. There’s a free version if you use less than 500 megs of indexed data, and enterprises can pay to index larger corpuses. I’m running that on my Vista 64 bit box and it indexes and searches like a little champ.

In my case I’ve been using it on my framework log files to help analyze bugs and performance bottlenecks. Here’s a screenshot of a search on the keyword “nhibernate” (NHibernate is an Object Relational Mapping software):

As you can see, it quickly pops up all the logged events where NHibernate was called from my classes.

To get this to work, all I had to do was add an “Input” for splunk to index – in this case the full path to my log file folder.

As you would expect, it does lots of reporting. It has broken down my log files into various columns. Examples of these columns are: custom C# properties I search on; the standard log file “stuff” such as the source name, date created, file size; even the sql commands that NHibernate generates for me. I can filter these columns for even more detailed breakdowns. In the next screenshot I am reporting on Entity ID values I use to track my framework objects.

I like splunk because it’s a one-stop shop for me to analyze all my various bits of IT Operations information. There’s a slick AJAX web user interface, and so far performance seems fine for me on my little dev laptop. I find it solid, intuitive, and I don’t have to expend much effort to install, manage, or learn it.

There’s also a way to extend splunk using its custom Application Programming Interface. I plan to investigate that when I have some free time but have not had a look yet.

I think any IT company should give splunk a test run.

Tuesday, May 19, 2009

WCF WTF

Today I was working on a WCF application integration layer for my framework. For some bizarre reason, no matter how many times I updated the service contract and rebuilt my client service references, I never got the latest version.

Specifically, I was trying to call the following method:

public void CreatePages(IList<WebPageDTO> pagesToCreate)

The WebPageDTO was declared (temporarily) in the WCF project. I put DataContract and DataMember on it, the project built fine, and I could even add a reference to it from a client application. However, I could never update the reference from my clients, and when trying to view the service directly I would get this error:

The type '[namespace].[servicename]', provided as the Service attribute value in the ServiceHost directive could not be found.

Finally, I had to modify the method signature to RETURN WebPageDTO – which I didn’t want to do. However, that fixed the service reference and now I can update it. Now I have switched the method stub back to return a custom ServiceResponse object, and that also works.

Bit of a head scratcher. I don’t fully understand why this is happening. Anyone know?
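For anyone trying to reproduce this, here is a minimal sketch of the shape of the contract involved. Only CreatePages, WebPageDTO, and ServiceResponse come from my project; the namespace, interface name, class name, and all the properties are placeholders made up for illustration. It assumes project references to System.ServiceModel and System.Runtime.Serialization.

```csharp
using System.Collections.Generic;
using System.Runtime.Serialization;
using System.ServiceModel;

namespace Example.Integration // placeholder namespace
{
    // DTO sent over the wire; the members are illustrative only.
    [DataContract]
    public class WebPageDTO
    {
        [DataMember] public string Title { get; set; }
        [DataMember] public string Url { get; set; }
    }

    // Custom response wrapper; the members are assumptions.
    [DataContract]
    public class ServiceResponse
    {
        [DataMember] public bool Success { get; set; }
        [DataMember] public int ItemsProcessed { get; set; }
    }

    [ServiceContract]
    public interface IPageService
    {
        [OperationContract]
        ServiceResponse CreatePages(IList<WebPageDTO> pagesToCreate);
    }

    // The Service attribute in the .svc file's ServiceHost directive has to
    // name this class by its full name: "Example.Integration.PageService".
    public class PageService : IPageService
    {
        public ServiceResponse CreatePages(IList<WebPageDTO> pagesToCreate)
        {
            // Stub body: just report how many pages were received.
            int count = pagesToCreate == null ? 0 : pagesToCreate.Count;
            return new ServiceResponse { Success = true, ItemsProcessed = count };
        }
    }
}
```

The error quoted above is what WCF reports when it cannot resolve the type named in the ServiceHost directive, so a mismatched type name or a stale assembly in the bin folder is one thing worth ruling out. I can't say for certain that this was the cause in my case.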

Monday, April 13, 2009

Griffon Solutions - Startup Diary

About four years ago my partner Marie-Claude and I started developing some custom ASP.NET websites for small businesses.

The company name - Griffon Solutions - comes from a lovely little village in the Gaspesie region of Eastern Quebec called l'Anse-au-Griffon.

What began as a part-time effort on evenings and weekends slowly started to get a little more organized over time. Two years ago, before we moved to Australia, we registered the company in Quebec.

Our reason for coming back from the Lucky Country last year was to focus more on this company, with the eventual goal of having a fun and challenging full-time web solutions business (software and consulting services) that we could run from anywhere.

We'd love to have an "open source business" - not just by writing Open Source software but by transparently sharing what we're up to, and how we're going about it, and learning as much as possible from the web community as we go.

Website Redesign

We’ve just launched a redesign of our website, www.griffonsolutions.com. We had a couple of pages online before, but the site was put together very very quickly as a placeholder, and needed a lot more love. In fact I’ve always been reluctant to publicize it :) As the old saying goes, “the cobbler’s children run barefoot”, and we never made the time to fix up the site, until now.

As a result of this SharePoint blog, I was contacted by Mario Hernandez, at Designs Drive. Mario has been looking into SharePoint and wanted to know a bit more about the LDAP integration I’ve written about. After a few email conversations he was kind enough to volunteer some of his time to help us redesign our website.

Marie-Claude and I picked a graphic design template we were happy with, and then Mario worked hard to make sure it was transformed into valid XHTML and CSS. We all felt it was important to adhere to web standards and avoid table layouts if at all possible. We’re very happy with the end result.

Thanks so much, Mario, for contributing your time and your knowledge to us!

I think this is an interesting trend, especially in tough economic times… Here we have a couple of guys working in Los Angeles and Quebec who have never met, trading their IT skills and time with each other online. I know we’re not the first ever to do this, but it shows how small the world is, and how many opportunities there are for people to collaborate and work together.

The Framework

Incidentally, our new website is built on a custom .NET web application framework I’ve been working on constantly for the last 5 years.

The framework has been very much a labour of love. Sometimes it has been very frustrating and dispiriting, while at (most) other times the work has been fascinating, challenging, and deeply educational.

I’m finishing up the architecture on it and aiming to release it this Autumn as supported Open Source (probably Apache 2). This means that other people can use it (even in proprietary software).

In plain English, the platform has two goals:

- Provide a foundation of Enterprise-level capabilities to any .NET application.

- Integrate with popular software, databases, and web services in a simple, stable, secure, and flexible way

It is standards-based and fully multilingual right out of the box. It uses NHibernate so it can support most databases without any modification.

From a technical perspective I’m still nailing down the shipping features, but currently it includes all of the following:

- C# 3.0 / .NET 3.5 Framework

- Generic business Entities to model common web software concepts such as users, websites, documents, and web pages. These are implemented via interfaces so you should be able to integrate them with your existing code without much modification

- Common metadata and provider information for all Entities, such as Creator, Date Last Modified, and Data Source

- Basic LINQ querying for all Entities

- Entities can be exposed via a variety of formats including JSON and XML, or through web services (such as REST and SOAP) or web feeds (RSS and Atom)

- Full multilingualism down to the level of an individual piece of data

- N-tier codebase, using object oriented best practices

- NHibernate database-agnostic data storage

- log4net for robust logging

- Application Integration layer to make it easier to consume and provide information from a wide variety of services, software, and other sources (such as RSS and Atom feeds)

- OAuth authentication for authenticating to web services such as YouTube or Google

- Some strongly typed web service managers and web controls to make it easy to use popular services like LinkedIn, Yahoo FireEagle, and Google

- Strongly-typed, standardized file access to a variety of file storage sources including Amazon S3, HTTP web servers, FTP servers, and Windows file systems

- Uses the .NET Provider Model (especially for Roles and Membership)

- Presentation layer with prebuilt base controls, pages, and master pages, as well as some server controls

- Includes basic website project with Robots.txt, Master Pages, sitemap, XRDS file, and Admin area to make it easy to start up and manage a new website
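To give a flavour of the "Entities via interfaces, with common metadata and LINQ querying" idea from the list above, here is a minimal sketch. The names (IEntity, WebPage, ModifiedSince) are invented for this post and are not the framework's real API; the metadata properties simply mirror the Creator, Date Last Modified, and Data Source items mentioned in the list.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical base contract: metadata every entity would expose.
public interface IEntity
{
    Guid Id { get; }
    string Creator { get; }
    DateTime DateLastModified { get; }
    string DataSource { get; }
}

// Hypothetical concrete entity modelling a web page.
public class WebPage : IEntity
{
    public Guid Id { get; set; }
    public string Creator { get; set; }
    public DateTime DateLastModified { get; set; }
    public string DataSource { get; set; }
    public string Title { get; set; }
}

public static class EntityQueries
{
    // Works for any entity type because it only touches the shared metadata.
    // Returns entities modified on or after the cutoff, newest first.
    public static IEnumerable<T> ModifiedSince<T>(this IEnumerable<T> entities, DateTime cutoff)
        where T : IEntity
    {
        return entities.Where(e => e.DateLastModified >= cutoff)
                       .OrderByDescending(e => e.DateLastModified);
    }
}
```

Because the query is written against the interface rather than a concrete class, the same call would work on users, documents, or any other type that implements it, which is roughly what I mean by integrating with existing code without much modification.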

I’ve been using various versions of this framework in production since 2003 / 2004 so it is tested and stable (my current internal release number is 2.7), but it’s nowhere near where I eventually hope it can be, which is why I’m hoping to build a thriving community around it.

Right now I’m in talks with a group that wants to evaluate using it to integrate their software with a popular web messaging service. If you too would like to evaluate the framework around the Q3/Q4 period, drop me an email (address below) and we can have a chat.

I’ll provide more updates on this framework during the summer, as it nears RTM.

The Future

Obviously there’s a lot going on. In the future, this blog will speak not just about SharePoint and related technologies, but about business and technology issues in general. I hope to learn as much as I share, and I hope above all that the blog remains interesting and that you enjoy reading it :)

Cheers,

Nick

P.S. You can always email me at nick@griffonsolutions.com.

Tuesday, February 17, 2009

Above the Clouds: A Berkeley View of Cloud Computing

The Electrical Engineering and Computer Sciences department at the University of California at Berkeley just put out a research paper on Cloud Computing as they see it.

The paper is an in-depth exploration of what some consider to be just another buzzword, Cloud Computing. Since nobody has agreed on what exactly it means, the implication is that it's just a marketing term.

I remember when web services started to appear, around 2000/2001 if I recall correctly. The descriptions and possibilities seemed great, but nobody really knew what to do with them or why. So there came a time when nobody talked about web services anymore and it looked like that particular bubble had burst.

In fact, behind the scenes, a host of companies and individuals were figuring web services out, building their own, and releasing them. A couple of years after the term started popping up, web services arrived for real and now we have mashups and SaaS and Software + Services and some really well-traveled XML fragments zipping around the globe.

The same thing seems to be going on with Cloud Computing. We're in the early days, and still hearing the "Moon on a stick" promises that Cloud Computing is a silver bullet for everything.

This white paper is one of the first I've seen that really quantifies the (potential) cost savings of Cloud Computing.

Some gems:

- Explanations on Cloud Computing and how it differs from previous attempts;

- Classes of Utility Computing on page 10, comparing Google AppEngine, Amazon Web Services, and Microsoft's beta Azure platform;

- Cloud Computing economic models, on page 12;

- A discussion of the Top 10 challenges - and potential solutions to them - on page 16;

- The observation that FedExing your data is a good way to cut down on your bandwidth costs and delays.

This is very impressive work. The full paper is here:

http://www.eecs.berkeley.edu/Pubs/TechRpts/2009/EECS-2009-28.pdf

Monday, February 09, 2009

SharePoint Best Practices - Another Great Year

We enjoyed another great year of Mindsharp's SharePoint Best Practices Conference in San Diego. Thanks to Mark Elgersma, Ben Curry, and Bill English as the chief organizers, although I know there are lots of other Mindsharp folks who worked hard to pull this off.

UPDATE: Just heard back from Ben - the primary conference organizers were Bill English, Paul Stork, Paul Schaeflein, Todd Bleeker, Steve Buchannan, Pamela James, Brian Alderman, Ben Curry, and Mark Elgersma. Thanks again guys!

Given the grim economy it was noticeable how many people showed up - over 350 attendees, I believe, in addition to all the vendors and organizers.

I attended with echoTechnology's Director of Sales, Sean O'Reilly. He's a real hoot - a bit of a legend on the conference circuit. We arrived on the Sunday. Since Sean and I are now using demo laptops and have the exhibit booth process nailed down after numerous conferences, it only took us about 15 minutes to set up the booth before we could unwind.

That night the BPC kicked off unofficially with a Super Bowl party in Ben Curry's suite. It was great to watch the game with various attendees and exhibitors.

Joel Oleson gave a funny keynote on Monday morning, talking about the 10 steps to success. He argued that SharePoint is plastic, so just because you can do something with it doesn't mean you should. Some of his analogies included Robot Barbie and headless chickens. There was also a disapproving mother ("IT") and a finger-painting baby who got paint all over the wall ("the business").

One quote he mentioned from Gartner says that by 2010 less than 35% of WSS sites will put effective governance in place!

Programming Best Practices

In between exhibit hours and meetings, I was able to attend only one seminar, given by Francis Cheung of Microsoft's Patterns and Practices Group. This was a really neat explanation of best practices for programming against SharePoint.

What was interesting was that Francis showed how object-oriented patterns like the Repository pattern could be used to abstract out SharePoint-specific resources like list names, loggers, and so on. Francis also pointed out the need to create strongly typed business entities for SharePoint, rather than making straight calls against SP objects.

This provides an additional layer of abstraction that allows mocking and unit testing. The idea is that for code testing purposes you should be able to swap in mock interfaces without relying on SharePoint being available. For instance you should be able to run unit tests against SharePoint code without the SQL database even being available.

These entities also make it easier to work with presenters/controllers without worrying about looking up Site or List GUIDs, and they provide an easy way to do CRUD operations.
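Here is a rough sketch of the idea as I understood it from the session, not code from Francis's slides or from the guidance itself. All of the names (TrainingCourse, ICourseRepository, InMemoryCourseRepository, CoursePresenter) are my own placeholders. The point is that the presenter depends only on an interface, so a unit test can swap in an in-memory implementation and never touch SharePoint or its SQL database.

```csharp
using System.Collections.Generic;
using System.Linq;

// Strongly typed business entity instead of a raw SharePoint list item.
public class TrainingCourse
{
    public int Id { get; set; }
    public string Title { get; set; }
    public int Seats { get; set; }
}

// Callers depend on this interface, never on SharePoint objects directly.
public interface ICourseRepository
{
    TrainingCourse GetById(int id);
    IList<TrainingCourse> GetAll();
    void Add(TrainingCourse course);
}

// In-memory stand-in for unit tests. A production implementation would
// wrap the SharePoint object model behind the same interface and keep
// list names and GUID lookups hidden inside it.
public class InMemoryCourseRepository : ICourseRepository
{
    private readonly List<TrainingCourse> _courses = new List<TrainingCourse>();

    public TrainingCourse GetById(int id)
    {
        return _courses.FirstOrDefault(c => c.Id == id);
    }

    public IList<TrainingCourse> GetAll()
    {
        return _courses.ToList();
    }

    public void Add(TrainingCourse course)
    {
        _courses.Add(course);
    }
}

// Presenter logic that can be tested without a SharePoint farm.
public class CoursePresenter
{
    private readonly ICourseRepository _repository;

    public CoursePresenter(ICourseRepository repository)
    {
        _repository = repository;
    }

    public int TotalSeats()
    {
        return _repository.GetAll().Sum(c => c.Seats);
    }
}
```

In a test you would construct CoursePresenter with the in-memory repository, add a couple of courses, and assert on the result; in production the same presenter would receive the SharePoint-backed repository instead.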

Microsoft currently has patterns and practices guidance available at www.microsoft.com/spg. From the guidance doc:

This guidance discusses the following:

- Architectural decisions about patterns, feature factoring, and packaging.

- Design tradeoffs for common decisions many developers encounter, such as when to use SharePoint lists or a database to store information.

- Implementation examples that are demonstrated in the Training Management application and in the QuickStarts.

- How to design for testability, create unit tests, and run continuous integration.

- How to set up different environments including the development, build, test, staging, and production environments.

- How to manage the application life cycle through development, test, deployment, and upgrading.

- Team-based intranet application development.

This approach is actually a standard approach to custom code development but SharePoint has tended to blur the lines a little bit. What is very interesting is that more and more of the BPC seminars were about programming and unit testing. People were even talking about Test Driven Development (TDD) against SharePoint!

What this indicates is that organizations are starting to treat SharePoint seriously as a development platform. This has always held potential but required a steep learning curve. For example, at the Best Practices Conference last year there was very little on these sorts of developer-centric practices. This year it was all about programming against SharePoint in the traditional way - using unit tests, mocking, web smoke tests, and OO patterns.

There hasn't been a killer app for SharePoint yet but it's coming.

Best Practices For Centrally Governing Your Portal and Governance

On Wednesday morning I gave a presentation on helpful tips for centrally managing a portal. I showed a governance site collection I have been working on and talked about how it can be used to make it intuitive and easy to run a portal.

I'm including my PowerPoint presentation here.

There are no screenshots of the governance site collection yet although I am uploading a couple as part of this post.

Hope this helps!

Saturday, January 17, 2009

Hello, New York!

Next week I'll be exhibiting echoTechnology's latest version of echo for SharePoint in New York City, at the SharePoint IMAGINE 2009 conference.

The conference is billed as "SharePoint in the Real World". Its focus is on how businesses use SharePoint to solve their specific real world needs, and how SharePoint impacts their bottom line.

It's organized by Impact Management and is taking place at the Microsoft NY Center at 1290 Avenue of the Americas on Wednesday Jan 21 from 9 to 4. Attendance is free so if you are in the area you should definitely sign up...you can do that here.

I'll be in NYC from Tuesday evening to Thursday afternoon, attending the conference and meeting with a variety of echoTechnology partners - so my itinerary is very tight. Still, it's a great opportunity to return to NYC, where I lived for a short while.

Good Times...

I moved home right after the awful 9/11 and the Dot Com bubble burst, and this is my first chance to go back. Although I was only living in New York for a year, it left an indelible impression on me - as it would on anyone.

My first professional programming job was in 2000/2001 as a Java programmer. I took New Jersey-based IT training company SetFocus' intense but great 3-month Java Master Track, and was then placed with an online education company in New York City.

We were developing a web-based learning system using JSP and servlets, in a cramped little office downtown on the corner of Church and Warren.

At first I lived in West Orange, New Jersey, with a small gang of roommates, and then we all moved to a small building in Astoria, Queens. This was inhabited by a porn star, an aspiring model, an unkillable cockroach (we learned to live and let live), and a foul-mouthed talking parrot named Sammy (not necessarily in the same apartment).

Some of my favourite memories are: exploring the parks and trails on the edge of Battery Park City; bar crawling with my roommates and friends; celebrity spotting; conniving my way into an amazing hidden club called Light, marked only by bouncers and a lit white window and way too cool for me; eating lunches on the steps of Federal Hall in Wall Street and exploring Manhattan on foot during lunch hours; and wandering the streets of Brooklyn Heights.

...Followed By Taxes

Living there was also an exercise in paperwork. Due to my particular circumstances I paid 7 levels of taxes at various times that year:

- New Jersey state income tax

- New York state income tax

- Connecticut state income tax

- New York city sales tax (anytime I bought something)

- US Federal income tax

- Canadian Federal income tax (although I was out of country, as a Canadian even death can't cut short your obligation to pay taxes); and

- Ontario provincial income tax

To this day the state of Connecticut faithfully sends me an annual update on my pension plan, which currently sits at 13 cents a year. I'm sure just mailing me costs more than that! Luckily they report that they are investing great time and attention to increase the yield, so when I retire in 30 years, my pension will be at 14 cents and I can finally buy that tropical island.

Anyway, if you're in the area and want to say hi, drop me a line!

Saturday, November 08, 2008

DevConnections in Las Vegas Next Week

I'll be in Las Vegas next week for the DevConnections / SharePoint Connections conference from Monday to Thursday, hosted at the Mandalay Bay resort and casino.

echoTechnology will have a booth set up so if you're around drop by the booth and please say hello!

As always I'll try to attend as many of the sessions as I can and blog about anything that might be helpful. And try not to blow all my money on blackjack...

Speaking of events, and on a somewhat unrelated note, LinkedIn has started releasing 3rd party applications on its platform. At the moment there are eight apps, including:

- Box.net - to store and share files

- BlogLink - by SixApart, showing your blog posts and your contacts' posts

- Slideshare Presentations and Google Presentations - to showcase and share PowerPoint slides

- Reading List by Amazon - to share your reading list with your contacts.

- My Travel - a neat app to share the events you'll be attending

Similar to the My Travel application, LinkedIn also added Events, which is a searchable directory of industry events, and the reason I'm mentioning LinkedIn in this post. DevConnections is listed and I've signed up as attending.

I love LinkedIn, and I'm glad they're improving their offering with utilities that genuinely add value and make the network seem more useful and personal. You can get a much better sense of who your connections are when you see their blog posts and share trip itineraries.

Saturday, October 04, 2008

Amazon EC2 To Support Windows OS

Great news from the folks at Amazon Web Services - their EC2 Cloud computing platform will shortly support Windows operating systems. This is a wonderful development as it will allow .NET applications to be hosted with IIS at the same level of scalability as the Linux folks now enjoy. I've signed up so when the first beta comes out, I have a fighting chance to test it.

Pricing will be higher for Windows OS than for Linux of course but with the advances in virtualization these days I doubt it will be a huge difference. Of course what you are paying for is the extra services of (presumably) professional backup, redundant servers, locked down security, onsite engineers, and all the bells and whistles a massive data centre run by one of the world's largest e-tailers will provide.

I'm a keen fan of Amazon's work - I think of all the major players they are the ones who understand the new economics of the internet the best - yes even better than Google I would argue. They are commoditizing application development in a granular and sustainable way.

Of course Google's revenue stream is huge. Of course they have had major successes by providing services such as online office apps, email, maps, analytics, AdSense and AdWords, but at the end of the day their revenue is entirely search-based and it isn't clear what level of support any of these additional services are likely to receive over time. Right now it doesn't matter that all of these services are "free" and "Beta", because they are intended to fuel Google's search revenue. However, that assumes that Google will remain the #1 search destination. If it doesn't, all of these services will have to be dropped or given some kind of business model. In my opinion, anyone building on these services is therefore taking a bit of a chance.

At the bottom of any software ecosystem, the founder is essentially bullet-proof. That's because anybody using the platform faces the switching cost to another ecosystem, and also because the more services are offered, the more compelling staying on the platform becomes.

What Amazon is quietly doing is encouraging everyone to build on their platform, but charging them for this. By doing so, they are making a sort of business guarantee - they get a revenue stream for each of their services, and in turn they can support it with Service Level Agreements and dedicated teams. In other words, there is a vision and a road map because Amazon's web services each raise money.

Recent improvements to Amazon's DevPay - which provides an easy way for developers to charge for their software - and their work on the super-scalable SimpleDB are proof that they are in this for the long haul.

At the end of the day, developing on any cloud computing platform isn't just a technical challenge. It raises a lot of thorny questions - how do you protect your data, what kind of legal issues accrue, what are the privacy issues, how do you handle service level agreements, how do you pass on your costs - just to name a few. Cloud computing is not a silver bullet and anybody committing to it needs to take a deep breath before they do, and research and plan ahead.

However, the commoditization of computing resources has the same kind of impact as the introduction of the telephone line or automobile factory - after a while you forget they are there and just RELY on them. Seen this way, a future timeline of technological progress might include the names, "Thomas Edison", "Henry Ford", and..."Jeff Bezos".

What do you think?

Tuesday, September 23, 2008

SharePoint Best Practices Conference: Keynote Speech by Tom Rizzo

Tom Rizzo, Microsoft's Director of SharePoint, kicked off the SharePoint Best Practices conference with a funny and insightful keynote speech. He provided a view into SharePoint's current and future development, and reminded us of the various releases and support that are currently available for the platform.

Past, Present, and Future

Tom talked about SharePoint's humble origins in the Tahoe days, when it was teamed with Exchange. Now it's one of Microsoft's fastest growing server products ever. He joked that they are still trying to come up with a three-word description of it, given that as a platform and product it is "floor wax and dessert topping at the same time".

He also touched on the future of SharePoint. Key directions for the next version will include investing in the "mobile experience", making the phone "a key client for SharePoint moving forward".

Another major investment is already occurring in the area of online hosting. Microsoft is trying to leverage its online SharePoint hosting experience, which I've blogged about before, and make it easier in the next version for clients to decide between on-premises and dedicated or multi-tenanted hosting in the cloud.

He described how SharePoint currently relates to the 2008 versions of Microsoft's products. SP1 with a slipstream install allows SharePoint to work on Windows Server 2008. It provides security enhancements (like a reduced attack surface by switching off non-critical services), performance gains, and full support for virtualization.

I'll mention that last point again - starting with SharePoint 2007 SP1, Microsoft now provides full support for SharePoint servers running on VMware! This is very important - every solution definition doc I ever wrote had to explain to clients that if anything went wrong with their VMware SharePoint instances, Microsoft would only provide "best effort" support. This is very good news. Here's the official announcement.

SQL Server 2008 adds transparent data encryption if you want to completely lock down your data - even if someone gains access to your SharePoint database server, they can't get at your data without the encryption key. It also provides performance benefits, including a nifty little feature called FILESTREAM that allows SQL Server to manage and "serve" large files (BLOBs) from a file system - basically a nice compromise between the advantages of file system storage vs database BLOB storage.

Finally, Visual Studio 2008 makes it much easier to build (and debug) Workflow Foundation workflows for SharePoint.

Tom's Top 10 Best Practices

- Capacity Planning isn't a suggestion! (Also, move to 64-bit because SharePoint vNext will be all 64-bit)

- Know Thy User

- Know Thy Data

- Know Thy Developer - use asynchronous calls, watch out for web part connector blocking, inefficient code, do lots of stress testing

- To thine own self be true [Unfortunately I don't remember what he meant by this]

- Scale up or out - actually do both! Plan your architecture to keep these options open

- SharePoint is an Enterprise app - don't let the WYSIWYG and wizards fool you - this takes investment of time, people, and money

- Support boundaries protect you and us [meaning Microsoft] - only use OOB or make customizations knowing they may not be supported going forward

- Weeding and pruning makes a beautiful deployment - constantly manage your farm and its content

- We all love code but.... Out of Box is good!

Questions and Answers

Q: What is Microsoft's commitment to cross-browser rendering?

A: Tom mentioned enhanced Firefox and Opera support in SharePoint 2007, as well as decreased use of ActiveX controls. This trend will continue. He mentioned that browsers on mobile phones are an increased priority. A partnership with Telerik makes the Rich Text Editor more accessible and cross-browser.

Q: What about Dynamics CRM support with MOSS, without using the BDC?

A: Dynamics CRM was released after SharePoint so SharePoint couldn't integrate fully with it [Although the reverse must have been possible?]. Going forward better coupling between Dynamics CRM and SharePoint vNext will occur.

Q: What about Accessibility Standards?

A: Improvements will be made in the product going forward. Tom cited the work with HiSoft on the Accessibility Kit as an example. [Personal comment - I don't believe this is ever going to be fully resolved as long as maximum UI "functionality" is a priority - case in point, the increased use of Silverlight and Ajax will likely make this a non-starter. I believe this can only be achieved with an MVC-style architecture that would service multiple clients, and this will likely represent a massive rearchitecture which I doubt will happen. For the current state of play see my previous post on accessibility in SharePoint.]

Q: What's going to happen with the Knowledge Network add-on [which automatically provided enhanced skill and people information in SharePoint based on email conversations]?

A: We liked the Knowledge Network so much we want to include it as a core feature in SharePoint vNext.
